The latest interview in our series with AAAI/SIGAI Doctoral Consortium participants features Shiming Wen, who is working on transparent and trustworthy AI systems. We found out more about her work, her experience as a research intern, and what inspired her to study AI.

Tell us a little about your PhD – where do you study and what is your research topic?

I am a PhD candidate in Information Science at Drexel University in Philadelphia. My research is about making AI systems more transparent and trustworthy. Right now, language models can give you answers that seem pretty confident, but there’s no easy way to verify whether those answers are actually correct or where they come from. I’m working on building models that can show their reasoning and point to the evidence behind their outputs – so people can actually trust what AI tells them, especially in areas like healthcare and legal document review.

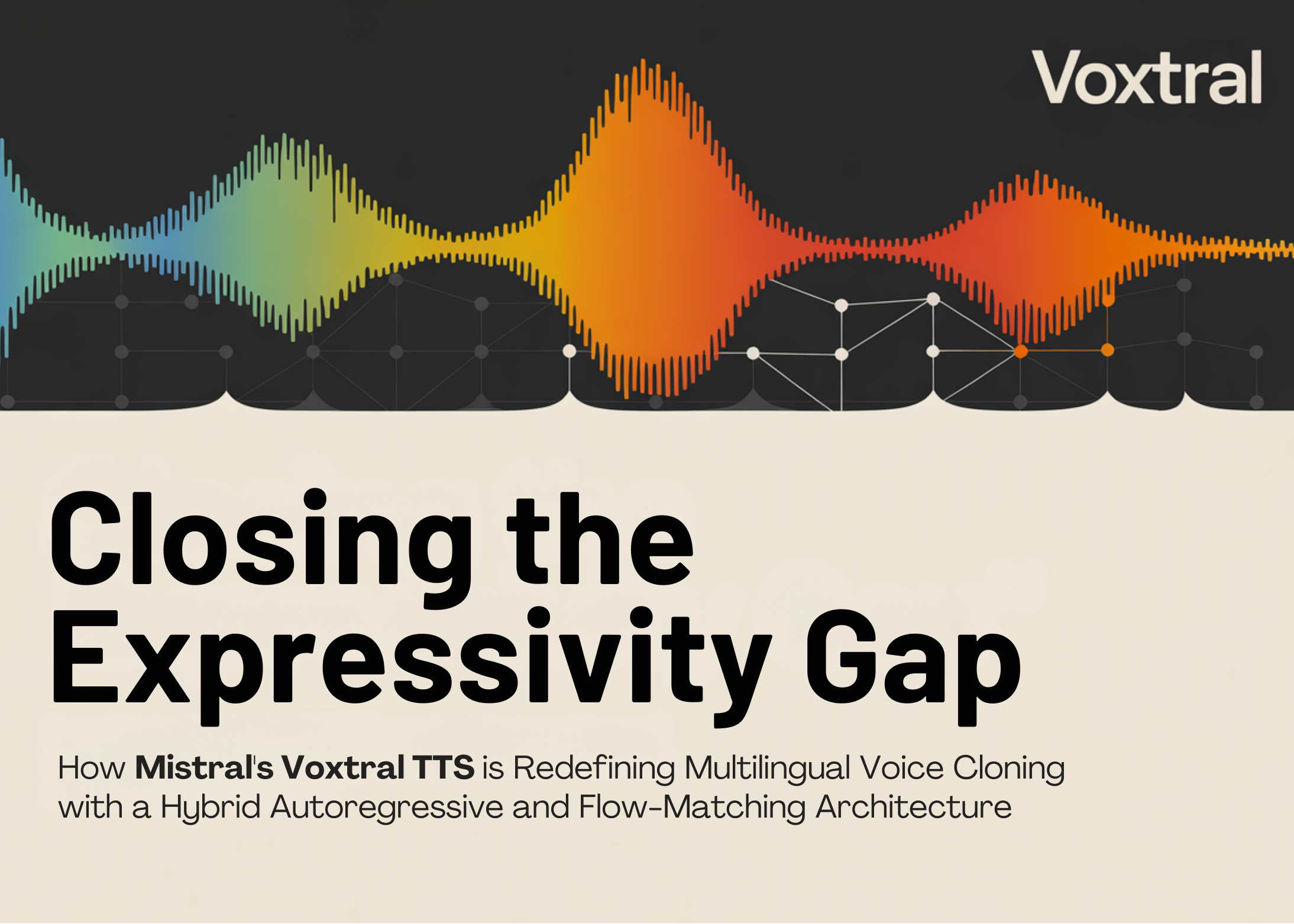

Illustration of the typical structure of text classification.

Can you give us an overview of the research you have done so far during your PhD?

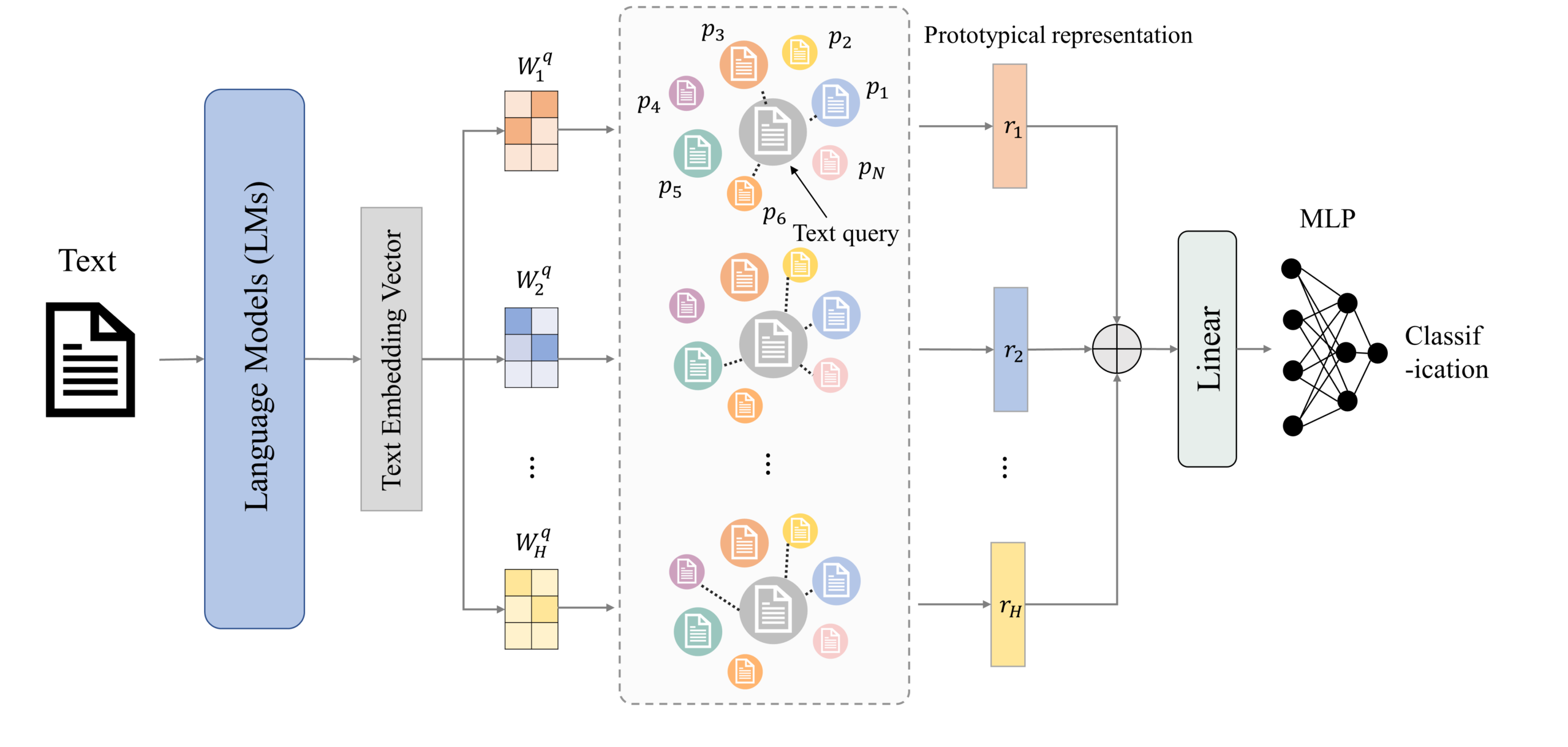

I started my PhD with a question: can we build interpretable models that are actually good enough to use in practice? Interpretable models have historically lagged behind black-box models in accuracy, which has made them hard to adopt. I developed a prototype-based approach that closes this gap: the model explains its decisions by pointing to similar examples it learned from, without sacrificing performance. From there, I extended the idea to generative models, exploring whether a model could not only give you an answer but also show you exactly where it found that answer. Along the way, I have also applied these ideas to medical AI, building interpretable diagnostic tools that work even with very limited training data.

Screenshot of our iOS app running pneumothorax (PTX) diagnosis on a 12.9-inch Apple iPad Pro.
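For readers unfamiliar with prototype-based reasoning, the sketch below shows the general idea in its simplest form: a new input is compared with a set of stored prototypes, it receives the class of the most similar one, and that prototype's example is returned as the explanation. This is a generic nearest-prototype illustration, not the model described in the interview. The `Prototype` class, `classify_with_explanation`, the toy two-dimensional vectors, and the example sentences are all hypothetical; real systems learn both the prototypes and the embeddings from data.

```python
import math
from dataclasses import dataclass


@dataclass
class Prototype:
    label: str            # class this prototype stands for
    example: str          # human-readable example shown to the user as the explanation
    vector: list[float]   # embedding of that example (hypothetical toy values below)


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def classify_with_explanation(query: list[float], prototypes: list[Prototype]):
    """Predict the label of the most similar prototype and return that
    prototype as the 'this looks like that' explanation, plus its similarity."""
    best = max(prototypes, key=lambda p: cosine(query, p.vector))
    return best.label, best, cosine(query, best.vector)


# Hypothetical toy prototypes for a two-class sentiment task.
prototypes = [
    Prototype("positive", "The staff were friendly and helpful.", [0.9, 0.1]),
    Prototype("negative", "The room was dirty and noisy.", [0.1, 0.9]),
]

label, proto, score = classify_with_explanation([0.8, 0.2], prototypes)
print(f"{label} (similarity {score:.2f}), because it resembles: {proto.example!r}")
```

Because the explanation is an actual stored example rather than an after-the-fact attribution, a user can judge directly whether the comparison makes sense.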

Is there an aspect of your research that was particularly interesting?

Definitely the spatial grounding work. At one point I redesigned how the model learned spatial coordinates, and accuracy jumped from about 65% to over 85%. The original loss function was essentially blind to small regions in documents, so the model learned to ignore them. Once I fixed the loss so that those small regions actually counted, everything changed. It was a powerful reminder that how you teach a model matters just as much as the model itself, which is really the core idea behind my research as a whole.
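The interview does not say which loss was originally used or exactly how it was changed, so the following is only a generic, hypothetical illustration of how a coordinate loss can be blind to small regions. It assumes boxes are given as `(x0, y0, x1, y1)` in page pixels and compares a plain L1 loss with a scale-normalized L1 loss, one common remedy; neither is claimed to be the method from the ACL paper. The function names and box values are made up for illustration.

```python
Box = tuple[float, float, float, float]  # (x0, y0, x1, y1) in page pixels


def l1_loss(pred: Box, gt: Box) -> float:
    """Plain L1 on raw coordinates: an error of N pixels costs the same for any box size."""
    return sum(abs(p - g) for p, g in zip(pred, gt))


def scale_normalized_l1(pred: Box, gt: Box) -> float:
    """L1 with each coordinate error divided by the ground-truth width or height,
    so the same pixel error costs more on a small box."""
    w, h = gt[2] - gt[0], gt[3] - gt[1]
    return sum(abs(p - g) / s for p, g, s in zip(pred, gt, (w, h, w, h)))


def area(b: Box) -> float:
    return max(0.0, b[2] - b[0]) * max(0.0, b[3] - b[1])


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    ix = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    iy = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = ix * iy
    union = area(a) + area(b) - inter
    return inter / union if union else 0.0


# A small region (20 x 10 px) and a large one (400 x 300 px), both predicted 30 px too far right.
small_gt, small_pred = (100, 100, 120, 110), (130, 100, 150, 110)
large_gt, large_pred = (100, 100, 500, 400), (130, 100, 530, 400)

for name, pred, gt in [("small", small_pred, small_gt), ("large", large_pred, large_gt)]:
    print(f"{name}: L1={l1_loss(pred, gt):.0f}  "
          f"scaled L1={scale_normalized_l1(pred, gt):.2f}  IoU={iou(pred, gt):.2f}")
```

Plain L1 assigns both errors the same cost (60), even though the small box is missed completely (IoU of 0) while the large box still overlaps well. The scale-normalized loss is 20 times larger for the small-box miss, so training can no longer ignore it.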

What are your plans to build on your research so far during your PhD – what areas will you be researching next?

So far, my research has shown that we can make language models more transparent, through prototype-based reasoning for classification and spatial grounding for document question answering, without giving up performance. The natural next step is to push these ideas further. I want to bring prototype-based interpretability to larger generative models. Most prototype-based approaches today only work for classification, and extending this kind of case-based reasoning to generative models remains a major open challenge. One direction I’m exploring is analyzing how different layers of a model encode different types of knowledge, and using that structure to build richer, more nuanced interpretations of the model’s output. I’m also exploring how to make the AI alignment process itself more transparent by incorporating prototype-based reasoning into reward models. The idea is that if we can explain why a reward model prefers one response over another, we can build safer and more trustworthy AI systems.

Can you tell us about your research experience at Samsung and Amazon?

At Samsung Research America in Mountain View, I worked as an NLP Research Intern on the Language Intelligence team. I tackled a problem that seemed simple but turned out to be very challenging: can an AI read a complex document, answer a question about it, and show exactly where it found the answer? Think of a doctor reviewing a 20-page medical report: they want the AI not only to say “the patient has condition X,” but also to point to the exact paragraph that supports that conclusion. I developed new training methods that teach the model to understand spatial relationships between coordinates in a document, dramatically improving its ability to locate answers accurately. This work was accepted at ACL this year.

During my summer internship as an Applied Scientist at Amazon, I worked on a different but related problem: producing AI output that people can actually understand and trust. The Amazon marketplace contains millions of products across thousands of categories, and each category needs a clear definition that accurately covers everything within it. Previously, these definitions were written by hand, a process that takes weeks and still cannot keep up with the pace of new products and emerging categories. I built a system that generates these definitions automatically, and its definitions actually outperformed human-written ones in accuracy and clarity. To me, it was a compelling example of AI’s potential: when a task involves aggregating information across millions of items, AI can produce more accurate and consistent results than manual effort, as long as the output is designed to be clear and trustworthy.

Looking back, both experiences reinforced the same lesson: building powerful AI is not enough. If people can’t understand or verify what the model is telling them, the technology isn’t reaching its full potential.

What prompted you to study artificial intelligence?

My journey began at the end of my undergraduate studies, when I was working on my final-year project. I trained a simple neural network on MNIST, a basic dataset of handwritten digits, and was amazed that such a small model could achieve over 95% accuracy. That moment sparked something in me: if a simple network can understand an image, can it understand human language? Could it hold real conversations with people? I became really excited to pursue these questions. With the advent of GPT and large language models, much of what once seemed like science fiction has become reality. But as these systems become more powerful, I find myself asking a new question: can we make them safe and trustworthy enough for people to truly rely on them? I believe this is the key to AI reaching its full potential, and it is what drives my research today.

Finally, what do you enjoy doing outside of your PhD?

I try to get outdoors as much as possible. I love walking along the river in Fairmount Park in Philadelphia and watching the sunset after a long day at work, skiing in the Pocono Mountains in the winter, or just kayaking and floating on the river in the summer. Being in nature is the best way for me to recharge; it keeps me balanced, mentally and physically.

About Shiming

Shiming Wen is a PhD candidate in Information Science at Drexel University, where her research focuses on making language models more interpretable and trustworthy. Her work spans interpretable prototype-based models for text classification and spatially grounded frameworks for document question answering. She has published at venues including ACL, COLING, and AAAI, and has contributed to federally funded research projects supported by NIH and DARPA. She has also gained industrial research experience at Samsung Research America, Amazon, and Ping An Technology. Outside of research, she enjoys kayaking, skiing, and exploring nature around Philadelphia.

Tags: AAAI, AAAI Doctoral Consortium, AAAI2026, ACM CJ

Lucy Smith is Senior Editorial Director at AIhub.