We’re excited to announce day-one support for NVIDIA Nemotron 3 Nano Omni on Clarifai. Available now on the Clarifai Reasoning Engine, Nano Omni delivers fast multimodal reasoning to developers building agentic systems, with throughput of over 400 tokens per second.

NVIDIA Nemotron 3 Nano Omni is a 30B A3B multimodal reasoning model designed for workloads that include documents, images, video, and audio. With a 256K context window and support for text, image, video, and audio input with text output, it gives developers a single model for handling rich multimodal context within an agentic workflow.

This makes it well suited for subagents in workflows where multimodal understanding and speed must go together.

A multimodal model for specialized subagents

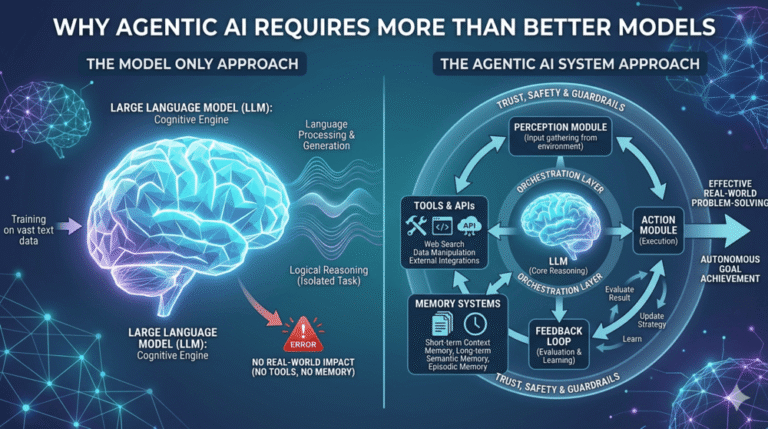

As agentic systems become more capable, they also become more specialized. Different models and components handle planning, execution, retrieval, and verification, each working within a broader workflow. In this architecture, a model that handles multimodal inputs must do more than process isolated inputs. It must interpret multiple modalities together, maintain context across steps, and respond quickly enough to stay within the operational loop.

As a lightweight multimodal model for subagents, Nemotron 3 Nano Omni can reason across screens, documents, charts, audio, and video without routing each modality through a separate stack. Instead of dividing vision, speech, and language across multiple models, it gives developers a more unified way to approach multimodal reasoning while keeping the overall system manageable.

Designed for computer use, document, audio, and video reasoning

Nano Omni is particularly relevant to the types of workloads that have become central to enterprise agentic systems.

For computer use, agents need to read interfaces, track the state of the user interface over time, and verify that actions are completed as expected. For document intelligence, they need to reason across text, tables, charts, screenshots, scanned pages, and mixed visual structure in the same pipeline. For audio and video workflows, they must correlate what was said, what was shown, and what changed over time.

These are all cases where multimodal capability must work reliably in production, with a model that can handle multiple modalities efficiently without splitting the workflow across separate models.

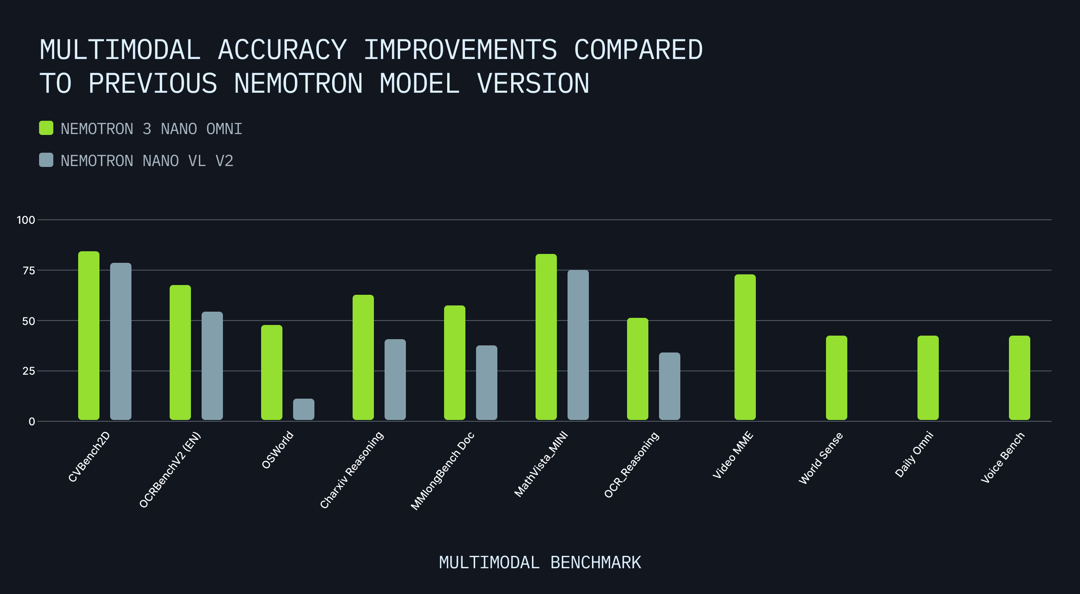

The model represents a significant leap in capability over previous models in the Nemotron family. Its gains on benchmarks such as OCRBenchV2, OCR_Reasoning, MathVista_MINI, and OSWorld reflect improved performance on the kinds of real workloads that agents are likely to serve today.

This is where Nano Omni naturally fits in, giving developers a single multimodal model for the tasks that subagents are increasingly expected to handle.

Agent-friendly token economics

In agentic systems, subagents handle repeated tasks across documents, screens, audio, and video within a larger workflow. Each call adds to the cost, latency, and infrastructure requirements of the system as a whole. NVIDIA Nemotron 3 Nano Omni integrates vision, speech, and language into a single multimodal model, reducing inference hops, coordination logic, and synchronization across models compared to separate perception stacks.

Nano Omni delivers approximately 2x higher throughput on average, along with approximately 2.5x less compute for video inference through temporal awareness and efficient video sampling. For multimodal agent workflows, this means higher throughput and reduced compute load without adding complexity to the stack.

The model uses a hybrid mixture-of-experts architecture with a Transformer-Mamba design, combined with 3D convolutional layers and efficient video sampling for temporal and video inputs. It can run on a single H100, H200, or B200, making it practical to deploy multimodal subagents without expanding infrastructure requirements.

High-throughput inference on Clarifai

On the Clarifai Reasoning Engine, NVIDIA Nemotron 3 Nano Omni runs at over 400 tokens per second, giving developers the throughput needed for production multimodal agent workflows. This matters in systems where subagents are frequently called to process documents, interfaces, audio, and video as part of an ongoing workflow.
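To put the throughput figure in context, here is a back-of-the-envelope sketch of decode time at the 400 tokens per second quoted in this announcement. It assumes a constant generation rate and ignores prefill, queuing, and network latency. The response sizes are hypothetical and chosen only for illustration.

```python
def decode_seconds(output_tokens: int, tokens_per_second: float = 400.0) -> float:
    """Estimate generation time, ignoring prefill, queuing, and network latency."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return output_tokens / tokens_per_second


# Hypothetical subagent response sizes, chosen only for illustration.
for n in (200, 1000, 4000):
    print(f"{n} tokens -> {decode_seconds(n):.2f} s")
# prints:
# 200 tokens -> 0.50 s
# 1000 tokens -> 2.50 s
# 4000 tokens -> 10.00 s
```

Because a workflow may call a subagent many times, these per-call times add up across the whole loop, which is why sustained throughput matters more than any single response.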

The Clarifai Reasoning Engine is designed to accelerate inference by combining optimized kernels, speculative decoding, and adaptive performance techniques to improve the throughput of reasoning models without compromising accuracy.

Getting started with Clarifai

Developers can try NVIDIA Nemotron 3 Nano Omni in the Clarifai Playground and can also access it through an OpenAI-compatible API, which makes it straightforward to integrate into existing agent applications, tools, and frameworks.

For large-scale or more controlled deployments, Clarifai provides a direct path to production using Compute Orchestration. Developers can run Nano Omni on the Clarifai Reasoning Engine or deploy it across their own cloud, VPC, on-premises, or air-gapped environments while managing deployments through a unified control plane.

NVIDIA Nemotron 3 Nano Omni is available on Clarifai today.

If you have any questions about accessing NVIDIA Nemotron 3 Nano Omni on Clarifai, join our Discord.
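As a rough illustration of what a call through an OpenAI-compatible API can look like, the sketch below builds a chat completions request that combines text and an image, using only the Python standard library. The message layout follows the general OpenAI chat completions format. The model identifier, base URL, and bearer-token authentication are placeholders and assumptions, not values from this announcement, so check Clarifai's documentation for the real ones.

```python
import json
import urllib.request


def build_chat_request(model: str, prompt: str, image_url: str) -> dict:
    """Build an OpenAI-style chat completions payload with text and one image."""
    return {
        "model": model,
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "text", "text": prompt},
                    {"type": "image_url", "image_url": {"url": image_url}},
                ],
            }
        ],
        "max_tokens": 512,
    }


def send_chat_request(base_url: str, api_key: str, payload: dict) -> dict:
    """POST the payload to <base_url>/chat/completions and return parsed JSON.

    Assumes bearer-token authentication; base_url is supplied by the caller.
    """
    request = urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=60) as response:
        return json.loads(response.read().decode("utf-8"))


# Placeholder values (hypothetical): substitute the real model ID and image.
payload = build_chat_request(
    model="MODEL_ID_FROM_CLARIFAI",
    prompt="Summarize the chart in this screenshot.",
    image_url="https://example.com/screenshot.png",
)
print(json.dumps(payload, indent=2))
```

Only the payload is built and printed here. Calling `send_chat_request` requires a valid base URL and API key, and existing OpenAI client libraries that accept a custom base URL should be able to send the same payload.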

