March 20, 2026

By Cogito Tech.

The rapid adoption of AI in medicine has not only presented new technical possibilities, but has also led to increased scrutiny by regulatory bodies responsible for safety and clinical effectiveness. Oversight by institutions such as the FDA and European authorities operating under the Medical Device Regulation (MDR) framework has transformed compliance into a structured, evidence-based process rather than a late-stage formality.

Regulatory approval is no longer just a technical checkpoint on a device roadmap; it is a critical moment that determines whether an innovation can translate into real clinical impact.

Why robust models still fail validation

Launching an AI-enabled software as a medical device (SaMD) means proving to regulators that your system is not only accurate, but safe, reliable, and clinically meaningful in its intended use.

However, even robust algorithms often stall at the regulatory stage, when teams discover that their training and validation datasets lack the depth of documentation, demographic representativeness, traceability, and compliance infrastructure needed to withstand regulatory scrutiny.

At Cogito Tech, our board-certified multidisciplinary team supports AI developers with traceable, clinically validated annotated datasets that align with FDA, HIPAA, and global regulatory expectations across healthcare settings.

Key data-centric challenges in regulatory submissions

Here are the key data challenges that even advanced AI projects face, and how Cogito Tech’s Medical Innovation Center addresses them.

Audit-ready data and annotation infrastructure

Regulators treat dataset readiness as a key element of a submission. They require comprehensive traceability and data provenance, including digital audit trails that demonstrate:

- Who annotated each data point

- When the changes were made

- How versions of the dataset were controlled

Ad hoc tools, spreadsheets, and loosely managed pipelines rarely meet requirements such as those in 21 CFR Part 11 for electronic records and audit trails.
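To make the three audit-trail questions above concrete (who annotated, when, and under which dataset version), here is a minimal sketch of an append-only annotation log in which each entry is chained to the previous one by a SHA-256 hash, so later edits to an entry become detectable. The function names and record fields are hypothetical, chosen only for illustration. This is not a 21 CFR Part 11-compliant system, which also involves access controls, electronic signatures, and validated software.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Optional


def _entry_hash(entry: dict) -> str:
    """Hash the entry's canonical JSON form (sorted keys) with SHA-256."""
    payload = json.dumps(entry, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_event(log: list, annotator: str, item_id: str, label: str,
                 dataset_version: str, timestamp: Optional[str] = None) -> dict:
    """Append an annotation event that is chained to the previous entry."""
    entry = {
        "annotator": annotator,              # who annotated the data point
        "item_id": item_id,
        "label": label,
        "dataset_version": dataset_version,  # which dataset version it belongs to
        # when the change was made (UTC, ISO 8601)
        "timestamp": timestamp or datetime.now(timezone.utc).isoformat(),
        "prev_hash": log[-1]["hash"] if log else "",
    }
    entry["hash"] = _entry_hash(entry)
    log.append(entry)
    return entry


def verify_log(log: list) -> bool:
    """Return True if every entry's hash and back-link are intact."""
    prev = ""
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev or _entry_hash(body) != entry["hash"]:
            return False
        prev = entry["hash"]
    return True
```

Changing any stored field after the fact, for example overwriting a label in an earlier entry, makes `verify_log` return `False`. Removing entries from the end of the log is not detected by this sketch, so a real system would also need to anchor the latest hash somewhere outside the log.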

Transparent cohort design and fairness documentation

Regulators are moving beyond robust performance metrics to demand transparent cohort design and documented evidence of fairness. Teams should clearly define validation sets, including inclusion and exclusion criteria. Legacy or poorly sourced datasets rarely meet this bar.

Many training datasets lack basic demographic metadata (e.g., age, gender, race/ethnicity), which limits the ability to assess bias or clinical generalizability. Public analyses of AI/ML-enabled devices have shown persistent reporting gaps in demographic transparency, increasing the regulatory focus on demographic inclusion.
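A simple way to surface the metadata gap described above is to measure, per demographic field, how many records actually carry a value, and how records are distributed across subgroups. The sketch below assumes each record is a plain dictionary. The field names (`age`, `gender`, `race_ethnicity`) are illustrative assumptions, not a prescribed schema.

```python
def demographic_completeness(records, fields=("age", "gender", "race_ethnicity")):
    """Return, per field, the fraction of records with a non-empty value."""
    total = len(records)
    report = {}
    for field in fields:
        present = sum(1 for r in records if r.get(field) not in (None, ""))
        report[field] = present / total if total else 0.0
    return report


def subgroup_counts(records, field):
    """Count records per subgroup, grouping missing values under 'unknown'."""
    counts = {}
    for r in records:
        value = r.get(field)
        key = "unknown" if value in (None, "") else value
        counts[key] = counts.get(key, 0) + 1
    return counts
```

A report like this does not prove fairness on its own. It only shows whether the dataset carries enough demographic information for a bias assessment to be possible at all.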

Drift control in advanced artificial intelligence systems

Model drift, resulting from shifts in real-world operational data, can erode model performance over time, raising safety concerns if continuous monitoring, performance auditing, and retraining are not maintained rigorously. Additionally, retraining models on new datasets often results in mandatory revalidation and regulatory resubmission under existing frameworks – a requirement that many AI labs fail to anticipate during early development.

Compounding the issue, guidance documents such as those from the Medical Device Coordination Group (MDCG) provide evolving but still limited pathways for fully autonomous or continuously learning AI systems. As a result, significant model updates are often treated as new regulated versions.

The primary challenge is to establish robust lifecycle governance that remains consistently aligned with regulatory expectations.
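As one illustration of the continuous monitoring mentioned in this section, the sketch below computes a population stability index (PSI) between a reference sample of a numeric input feature (for example, from the validation cohort) and a more recent production sample. PSI is a swapped-in, commonly used drift heuristic, not a method named by any regulator or by this article. The bin count and any alert threshold are assumptions that would need to be justified for each device.

```python
import math


def population_stability_index(reference, current, n_bins=10, eps=1e-6):
    """PSI between a reference and a current sample of one numeric feature.

    Both inputs must be non-empty sequences of numbers.
    """
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / n_bins or 1.0  # fall back to 1.0 if the reference is constant

    def bin_fractions(values):
        counts = [0] * n_bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), n_bins - 1)  # clamp out-of-range values into edge bins
            counts[idx] += 1
        # eps keeps empty bins from producing log(0) or division by zero
        return [max(c / len(values), eps) for c in counts]

    ref = bin_fractions(reference)
    cur = bin_fractions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))
```

Identical distributions give a PSI of 0, and the value grows as the current sample moves away from the reference. A monitoring job could log this value per feature on a schedule and flag it for review when it crosses a documented, pre-agreed threshold.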

Data quality, explainability and interoperability as regulatory gatekeepers

Poor data quality is one of the most significant regulatory barriers for AI systems. Regulators require documented evidence of the following:

- Accuracy and completeness

- Representativeness

- Bias mitigation

- Clinical relevance

- Traceable data provenance

Poor documentation, inconsistent nomenclature, and fragmented data formats increase scrutiny and undermine the defensibility of a submission.

Therefore, AI systems must embed strong data governance, lineage tracking, interoperability standards, and transparent documentation into the training data pipeline from the beginning.
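One small building block for lineage tracking is a deterministic fingerprint of a dataset version, so that any change to an item's content or label yields a different identifier that can be recorded alongside the trained model. The sketch below is an assumption-laden illustration. The item structure (`item_id`, `content` as bytes, `label`) is hypothetical, and a production pipeline would typically hash files on disk rather than in-memory bytes.

```python
import hashlib
import json


def dataset_fingerprint(items):
    """Deterministic SHA-256 over (item_id, content hash, label) triples.

    Items are sorted first, so the result does not depend on input order.
    """
    rows = sorted(
        (item["item_id"], hashlib.sha256(item["content"]).hexdigest(), item["label"])
        for item in items
    )
    return hashlib.sha256(json.dumps(rows).encode("utf-8")).hexdigest()
```

Recording this fingerprint with each training run gives a verifiable link between a model version and the exact data it was trained or validated on.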

How Cogito Tech turns regulatory complexity into competitive advantage

Validated, 21 CFR Part 11-aligned data governance and traceability

Through DataSum, our proprietary Nutrition Facts-style framework, Cogito Tech provides structured and transparent documentation of dataset quality, composition, and management.

The framework aligns with requirements such as 21 CFR Part 11 for electronic records and audit trails. HIPAA-compliant, FDA-ready workflows replace ad hoc processes with controlled, audit-ready infrastructure.

By managing the full lifecycle – from pre-labeling and quality control to auditing and version tracking – we ensure end-to-end traceability, clear data provenance, and defensible submission readiness.

Documented cohort representation and defensible fairness validation

Through its global network of multidisciplinary medical experts, Cogito Tech benchmarks and validates datasets across specialties and geographies.

This strengthens cohort credibility across diverse clinical settings and patient populations while enabling:

- Transparent demographic representation

- Structured inclusion/exclusion documentation

- Defensible fairness validation

- Alignment with evolving regulatory expectations

Lifecycle control and change management

Cogito Tech mitigates model drift and regulatory risk with FDA-ready workflows and 21 CFR Part 11-compliant processes that ensure structured documentation, traceability, and audit-readiness across the AI lifecycle.

Our Innovation Center supports:

- Continuous dataset monitoring

- Controlled retraining documentation

- Version tracking and performance benchmarking

- Structured revalidation support

This infrastructure simplifies change management, reduces friction in resubmission, and helps AI systems remain stable in performance and compliant as they evolve.

Traceable, standards-compliant data integrity and interoperability

DataSum strengthens provenance documentation and lineage tracking to support regulatory submissions, including FDA 510(k) pathways where applicable.

End-to-end workflows, which include acquisition, curation, annotation, validation, and auditing, ensure accuracy, completeness, and demographic representativeness across modalities.

Support for formats such as NRRD, NIfTI, and DICOM, along with multimodal clinical datasets, enhances interoperability and readiness for submission.

Together, these capabilities integrate structured governance, bias control, and traceable documentation directly into the training data pipeline – aligning AI development with regulatory expectations from the beginning.

Scalable data creation, bias monitoring, and external validation

Leveraging a wide range of clinical annotations, Cogito Tech scales training data generation, labeling, and quality assurance services while incorporating regulatory safeguards against sampling bias, spectrum bias, and demographic underrepresentation. Through multi-center, multi-geographical cohort sourcing and expert-led validation, we ensure that datasets reflect real-world clinical diversity and intended populations.

Our approach enables:

- Diverse, multi-center cohort development

- Demographic balance across patient subgroups

- Sampling and spectrum bias mitigation

- Independent external validation across healthcare organizations, regions, and time frames

- Alignment with FDA and EU expectations for generalizability and fairness
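To illustrate what external validation across sites or patient subgroups can look like in practice, here is a minimal sketch that computes sensitivity (true positive rate) separately for each subgroup. The record fields `truth` and `pred` (1 for positive, 0 for negative) and the grouping key are hypothetical names used only for this example. A real evaluation would also report confidence intervals and additional metrics such as specificity.

```python
def sensitivity_by_subgroup(rows, group_key):
    """Per-subgroup sensitivity (true positive rate) from labelled predictions.

    Subgroups with no truly positive cases are omitted from the result.
    """
    tallies = {}
    for row in rows:
        if row["truth"] != 1:
            continue  # sensitivity only considers truly positive cases
        counts = tallies.setdefault(row[group_key], [0, 0])  # [true positives, positives]
        counts[1] += 1
        if row["pred"] == 1:
            counts[0] += 1
    return {group: tp / pos for group, (tp, pos) in tallies.items()}
```

A large gap between subgroups, such as one clinical site detecting all positive cases while another detects only half, is the kind of finding that cohort documentation should explain or that additional data collection should address.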

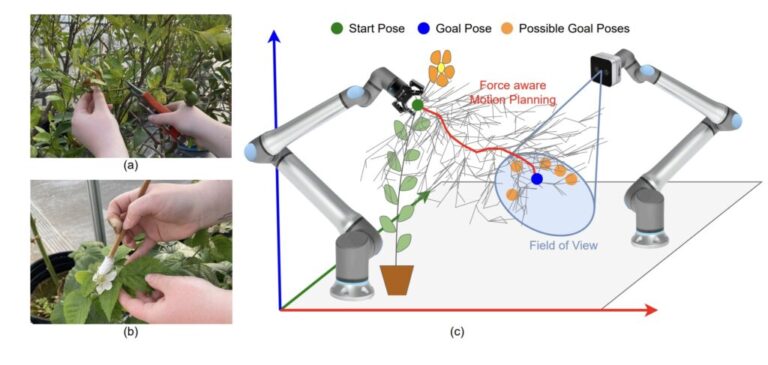

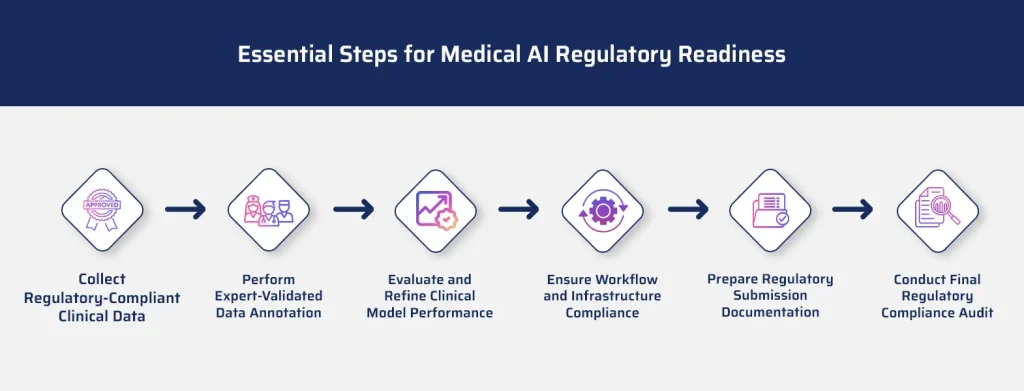

A step-by-step guide to preparing AI models for submission to the FDA

AI developers need to take the following steps when building AI, machine learning, or computer vision (CV) models for healthcare organizations and MedTech companies that require FDA approval before deployment:

- Collect or create HIPAA- and FDA-compliant multimodal medical datasets.

- Label data accurately. Labeling accuracy matters even more in healthcare than in other industries.

- Integrate medical expert review into the data pipeline for quality control and validation.

- Maintain a clear, robust audit trail that meets FDA expectations.

- Test models and refine the data to improve performance.

Conclusion

Regulatory approval for AI-powered medical systems is no longer achieved by model performance alone. It requires structured governance, defensible data quality, transparent cohort design, and ongoing lifecycle documentation consistent with the expectations of the US Food and Drug Administration (FDA) and the MDR.

Cogito Tech embeds compliance directly into the data lifecycle, transforming training and validation datasets into audit-ready regulatory assets. Through 21 CFR Part 11-compliant traceability, clinically validated annotation pipelines, expert-led cohort governance, and consistently maintained documentation, we reduce submission risk and strengthen technical filings for FDA 510(k), De Novo, and MDR pathways.

For AI innovators in healthcare and medical technology, regulatory readiness should not be a late-stage patch. With Cogito Tech, it’s a built-in competitive advantage from day one.
