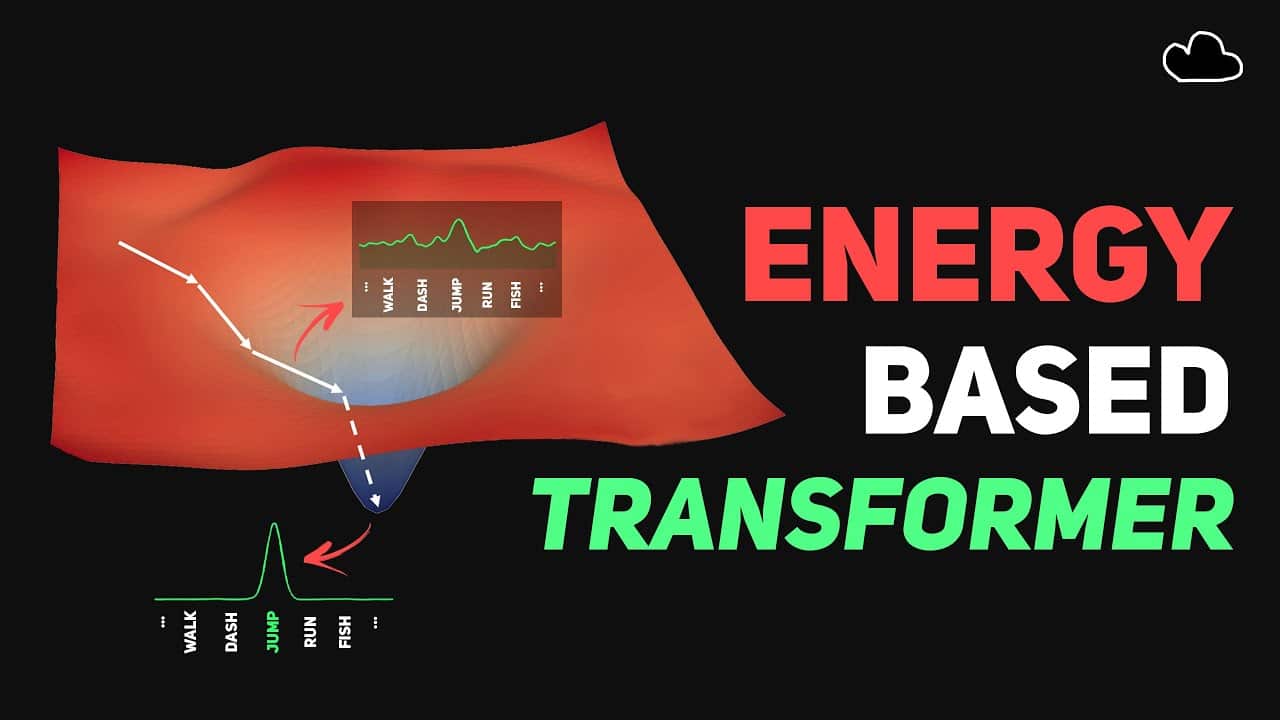

Energy-Based Models (EBMs) aren’t new: they assign an energy to each (context, answer) pair, where lower energy means a better fit. Energy-Based Transformers (EBTs) simply apply that classic EBM idea as the transformer’s training objective. At inference, the model starts from a guess and takes a few “downhill” steps that lower its energy. This turns inference into quick search + self-checking: try a few candidates, keep the lowest-energy one, and spend extra steps only on hard cases. So it’s not a new architecture, it’s just a new objective!
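To make the "downhill steps + keep the lowest-energy candidate" idea concrete, here is a minimal toy sketch in plain Python. It is not the paper's model: the quadratic `energy` function, its analytic gradient, and the names `refine` and `think` are all hypothetical stand-ins for a learned transformer energy. The stopping tolerance is likewise an assumed way to illustrate spending more steps on hard cases.

```python
import random


def energy(context: float, answer: float) -> float:
    """Toy energy (assumption): lowest when answer == 2 * context."""
    return (answer - 2.0 * context) ** 2


def energy_grad(context: float, answer: float) -> float:
    """Analytic gradient of the toy energy with respect to the answer."""
    return 2.0 * (answer - 2.0 * context)


def refine(context, guess, step_size=0.1, max_steps=50, tol=1e-6):
    """Take 'downhill' steps on one guess until the energy stops improving.

    Returns (answer, final_energy, steps_used). Guesses that start far from
    a good answer need more steps, so compute adapts to difficulty.
    """
    answer = guess
    steps = 0
    for steps in range(1, max_steps + 1):
        new_answer = answer - step_size * energy_grad(context, answer)
        improvement = energy(context, answer) - energy(context, new_answer)
        answer = new_answer
        if improvement < tol:  # self-check: energy has basically converged
            break
    return answer, energy(context, answer), steps


def think(context, num_candidates=4, seed=0, **refine_kwargs):
    """Refine several random guesses and keep the lowest-energy result."""
    rng = random.Random(seed)
    results = [
        refine(context, rng.uniform(-5.0, 5.0), **refine_kwargs)
        for _ in range(num_candidates)
    ]
    return min(results, key=lambda result: result[1])


if __name__ == "__main__":
    best_answer, best_energy, steps_used = think(context=1.5)
    print(best_answer, best_energy, steps_used)
```

In a real EBT the energy would come from the transformer itself and the gradient from automatic differentiation, but the control flow (guess, descend, compare energies, stop early on easy inputs) follows the same shape as this sketch.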

Energy-Based Transformers are Scalable Learners and Thinkers

[Paper]

This video is supported by the kind Patrons & YouTube Members:

🙏Nous Research, Chris LeDoux, Ben Shaener, DX Research Group, Poof N’ Inu, Andrew Lescelius, Deagan, Robert Zawiasa, Ryszard Warzocha, Tobe2d, Louis Muk, Akkusativ, Kevin Tai, Mark Buckler, NO U, Tony Jimenez, Ângelo Fonseca, jiye, Anushka, Asad Dhamani, Binnie Yiu, Calvin Yan, Clayton Ford, Diego Silva, Etrotta, Gonzalo Fidalgo, Handenon, Hector, Jake Disco very, Michael Brenner, Nilly K, OlegWock, Daddy Wen, Shuhong Chen, Sid_Cipher, Stefan Lorenz, Sup, tantan assawade, Thipok Tham, Thomas Di Martino, Thomas Lin, Richárd Nagyfi, Paperboy, mika, Leo, Berhane-Meskel, Kadhai Pesalam, mayssam, Bill Mangrum, nyaa, Toru Mon

[Business Inquiries] bycloud@smoothmedia.co

[Video Editor] @Booga04