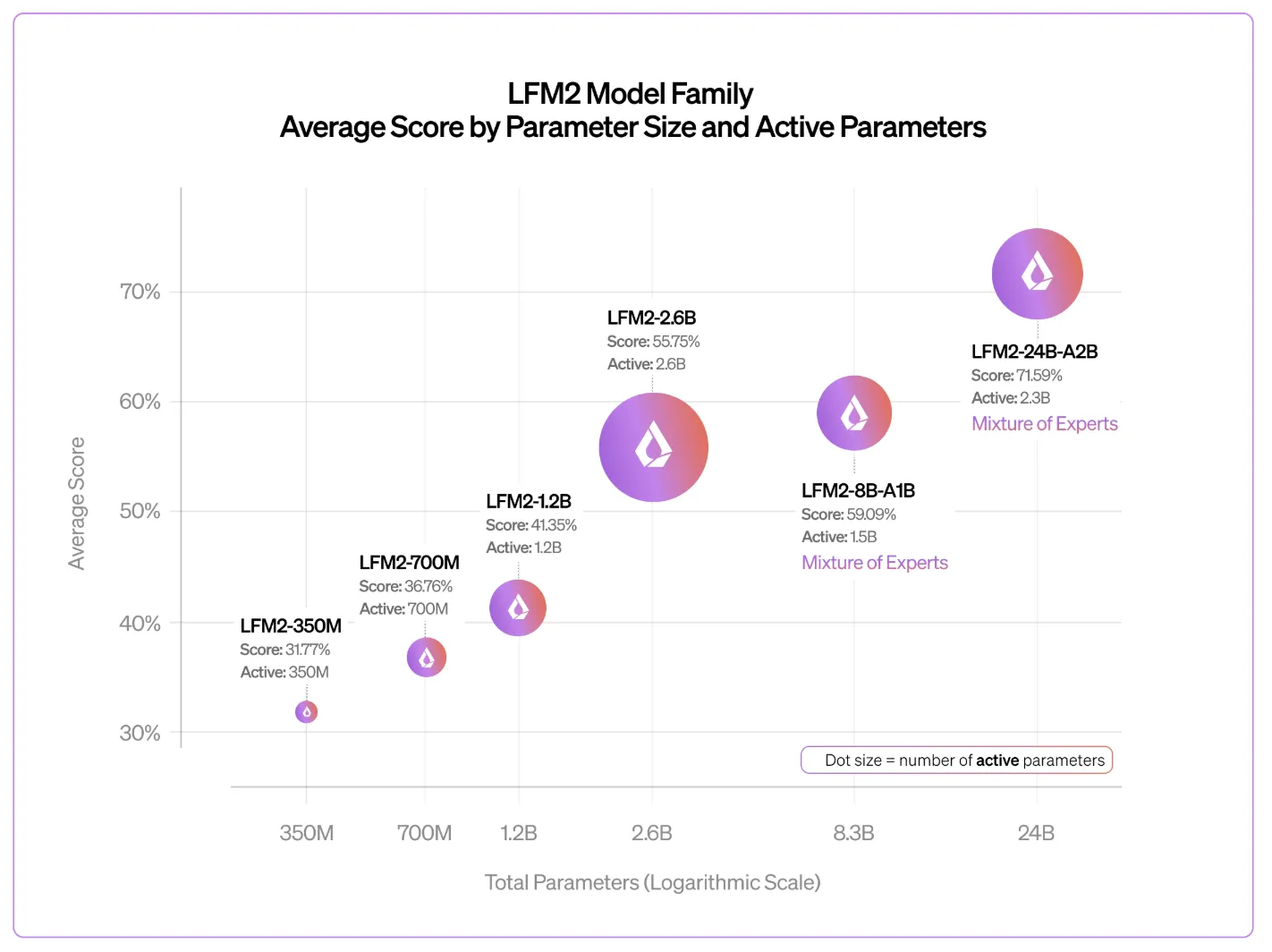

The generative AI race has long been a game of “bigger is better.” But as the industry hits the limits of power consumption and memory bottlenecks, the conversation is shifting from raw parameter counts to architectural efficiency. The Liquid AI team is leading this charge with the release of LFM2-24B-A2B, a 24-billion-parameter model that redefines what we should expect from edge-capable AI.

The “A2B” architecture: a 1:3 ratio for efficiency

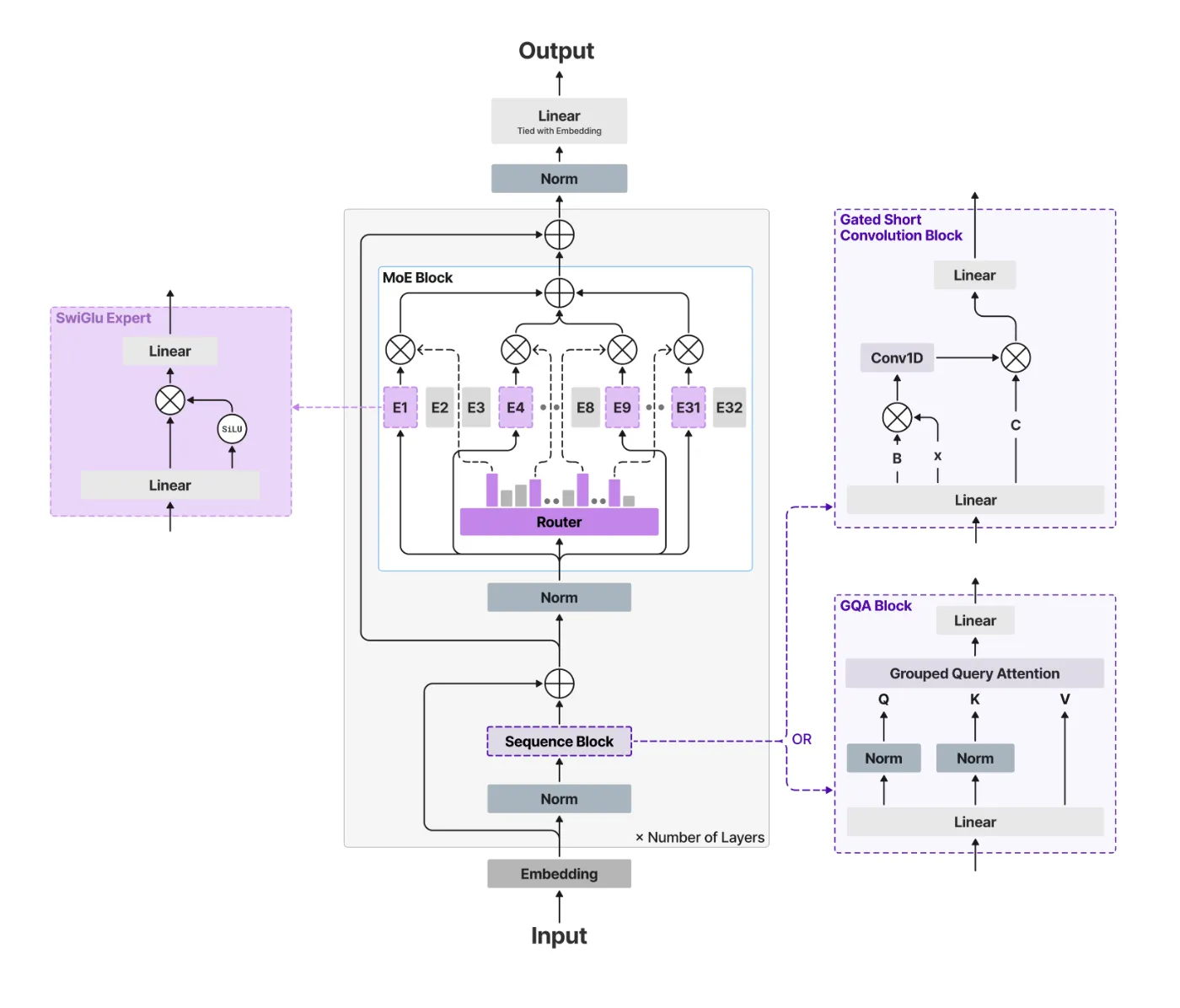

The “A2B” in the model name stands for “Attention-to-Base.” In a conventional Transformer, every layer uses softmax attention, which scales quadratically, $O(N^2)$, with sequence length $N$. This results in a huge key-value (KV) cache that eats up VRAM.

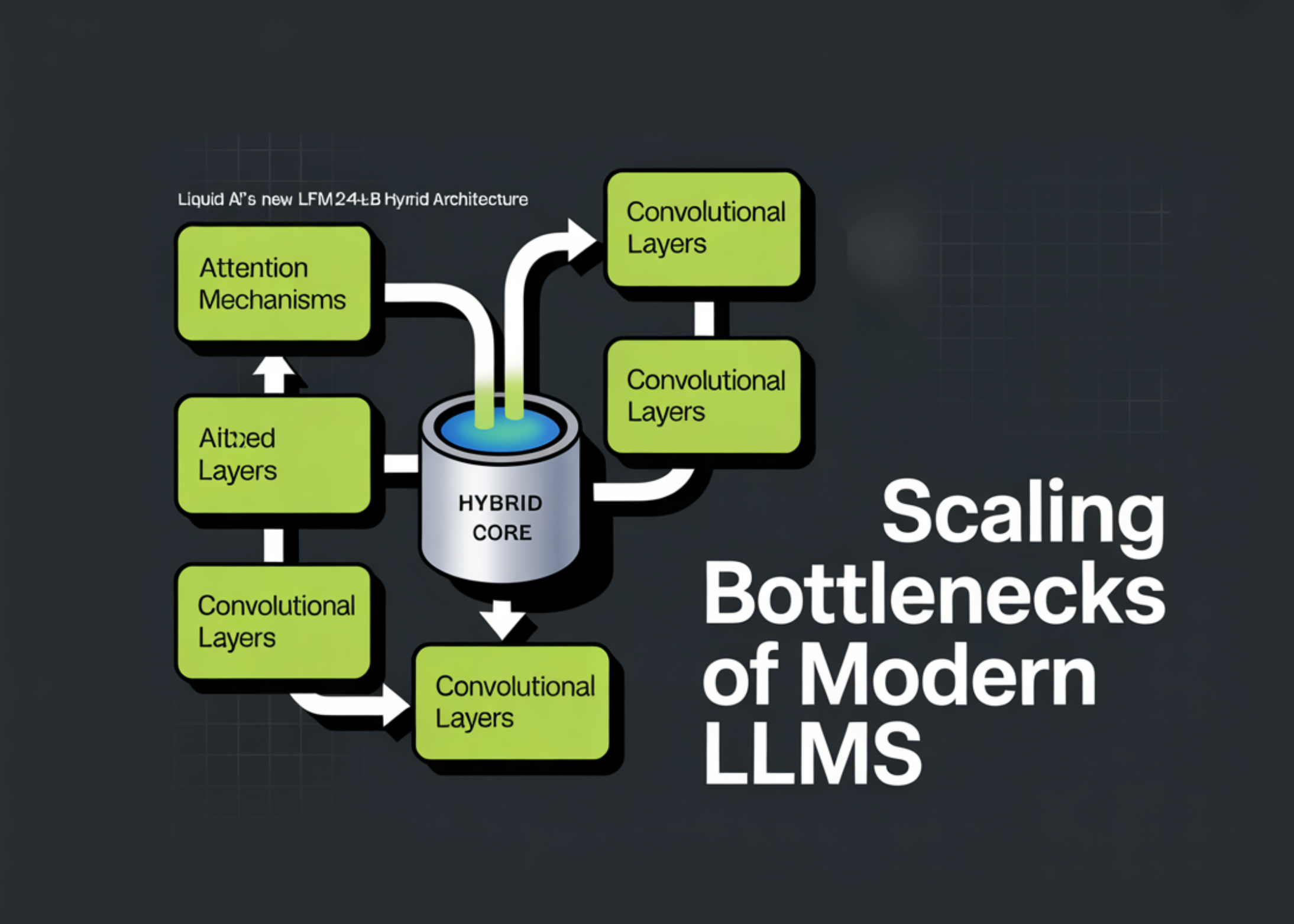

The Liquid AI team sidesteps this by using a hybrid architecture. The “Base” layers are efficient gated short convolution blocks, while the “Attention” layers use Grouped Query Attention (GQA).
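To make the idea of a gated short convolution concrete, here is a minimal single-channel sketch. It is a simplification for illustration, not Liquid AI's implementation: the function name, the two gate vectors, and the kernel values are all assumptions, and in a real block the gates would be computed from the input by learned projections rather than passed in.

```python
from typing import List

def gated_short_conv(x: List[float], in_gate: List[float],
                     out_gate: List[float], kernel: List[float]) -> List[float]:
    """Causal short convolution wrapped in an input gate and an output gate.

    x, in_gate, out_gate: one channel's values over time (equal lengths).
    kernel: short filter; kernel[0] weights the current step,
            kernel[1] the previous step, and so on.
    """
    if not (len(x) == len(in_gate) == len(out_gate)):
        raise ValueError("x, in_gate and out_gate must have the same length")
    gated_in = [g * v for g, v in zip(in_gate, x)]  # input gating
    out = []
    for t in range(len(x)):
        acc = 0.0
        # Only the last len(kernel) inputs are visited, never the full history.
        for k, w in enumerate(kernel):
            if t - k >= 0:
                acc += w * gated_in[t - k]
        out.append(out_gate[t] * acc)  # output gating
    return out

# Hypothetical values, chosen only to make the arithmetic easy to follow.
print(gated_short_conv([1, 2, 3, 4], [1, 1, 1, 1], [1, 0, 1, 2], [0.5, 0.5]))
```

Each output step touches at most `len(kernel)` past inputs, so the work grows linearly with sequence length and the state kept between steps has a fixed size, unlike a KV cache that grows with every token.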

In the LFM2-24B-A2B configuration, the model uses a 1:3 ratio of attention blocks to convolution blocks:

- Total layers: 40

- Convolution blocks: 30

- Attention blocks: 10

By interspersing a small number of GQA blocks with a majority of gated convolution layers, the model retains the high-resolution retrieval and reasoning of a Transformer while keeping the fast prefill and low memory footprint of a linear-complexity model.
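A back-of-the-envelope sketch shows what the 10-of-40 split means for KV cache size. Only the layer counts and the 32,768-token context come from the article; the KV head count, head dimension, and 2-byte cache precision below are hypothetical placeholders, not published LFM2-24B-A2B values.

```python
def kv_cache_bytes(attn_layers: int, seq_len: int, kv_heads: int,
                   head_dim: int, bytes_per_value: int = 2) -> int:
    """Bytes needed to cache keys and values for one sequence."""
    # Factor 2: each attention layer stores one key tensor and one value tensor.
    return 2 * attn_layers * kv_heads * head_dim * seq_len * bytes_per_value

# Hypothetical GQA shape, assumed for illustration only.
KV_HEADS, HEAD_DIM, SEQ_LEN = 8, 128, 32_768

full = kv_cache_bytes(40, SEQ_LEN, KV_HEADS, HEAD_DIM)    # attention in all 40 layers
hybrid = kv_cache_bytes(10, SEQ_LEN, KV_HEADS, HEAD_DIM)  # attention in 10 layers only

print(f"all-attention: {full / 2**30:.2f} GiB")
print(f"hybrid 10/40:  {hybrid / 2**30:.2f} GiB")
print(f"reduction:     {full / hybrid:.0f}x")
```

The 4x reduction does not depend on the assumed head shape, because the cache grows in direct proportion to the number of attention layers. The convolution blocks keep only a small fixed-size state, which this estimate ignores.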

Sparse MoE: 24B intelligence on a 2B budget

The most important feature of LFM2-24B-A2B is its Mixture of Experts (MoE) design. Although the model contains 24 billion parameters, it activates only 2.3 billion parameters per token.
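As a rough illustration of how an MoE layer ends up using only a fraction of its weights per token, here is a generic top-k routing sketch. The article does not state the expert count, the value of k, or the router details for LFM2-24B-A2B, so the function name and all numbers below are assumptions, and real routers differ in where they apply the softmax.

```python
import math
from typing import List, Tuple

def route_top_k(router_logits: List[float], k: int) -> List[Tuple[int, float]]:
    """Select the k highest-scoring experts and softmax-normalise their weights."""
    if not 0 < k <= len(router_logits):
        raise ValueError("k must be between 1 and the number of experts")
    # Expert indices sorted by score, highest first; keep the best k.
    top = sorted(range(len(router_logits)),
                 key=lambda i: router_logits[i], reverse=True)[:k]
    peak = max(router_logits[i] for i in top)  # subtract for numerical stability
    exps = [math.exp(router_logits[i] - peak) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]

# Hypothetical router scores for 4 experts; only 2 would run for this token.
for expert, weight in route_top_k([0.0, 2.0, 1.0, -1.0], k=2):
    print(f"expert {expert}: weight {weight:.3f}")
```

Experts that are not selected do no work for that token, which is how the active parameter count can stay far below the total.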

This sparse activation is a game-changer for deployment. Because the active parameter path is so lean, per-token compute stays low, and the model is designed to fit in 32 GB of RAM. This means it can run locally on high-end consumer laptops, desktops with integrated GPUs (iGPUs), and dedicated NPUs without needing a data-center-grade A100. It effectively provides the knowledge density of a 24B model with the inference speed and energy efficiency of a 2B model.
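The arithmetic behind the 32 GB figure can be sketched as follows. The parameter counts are from the article; the bit widths are assumptions, since the article does not say which precision the 32 GB target refers to. The estimate covers weights only and ignores activations, caches, and runtime overhead.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate storage for the weights alone, in decimal gigabytes."""
    return params_billion * bits_per_weight / 8

TOTAL_B, ACTIVE_B = 24.0, 2.3  # figures quoted in the article

for bits in (16, 8, 4):  # assumed precisions, for comparison only
    print(f"{bits:>2}-bit weights: {weight_memory_gb(TOTAL_B, bits):.0f} GB")

print(f"active share per token: {ACTIVE_B / TOTAL_B:.1%}")
```

All 24 billion weights have to be resident because any expert may be selected for the next token, so under these assumptions a 32 GB machine would need roughly 8-bit or smaller weights. The 2.3 billion active parameters determine the compute per token rather than the storage.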

Benchmarks: punching up

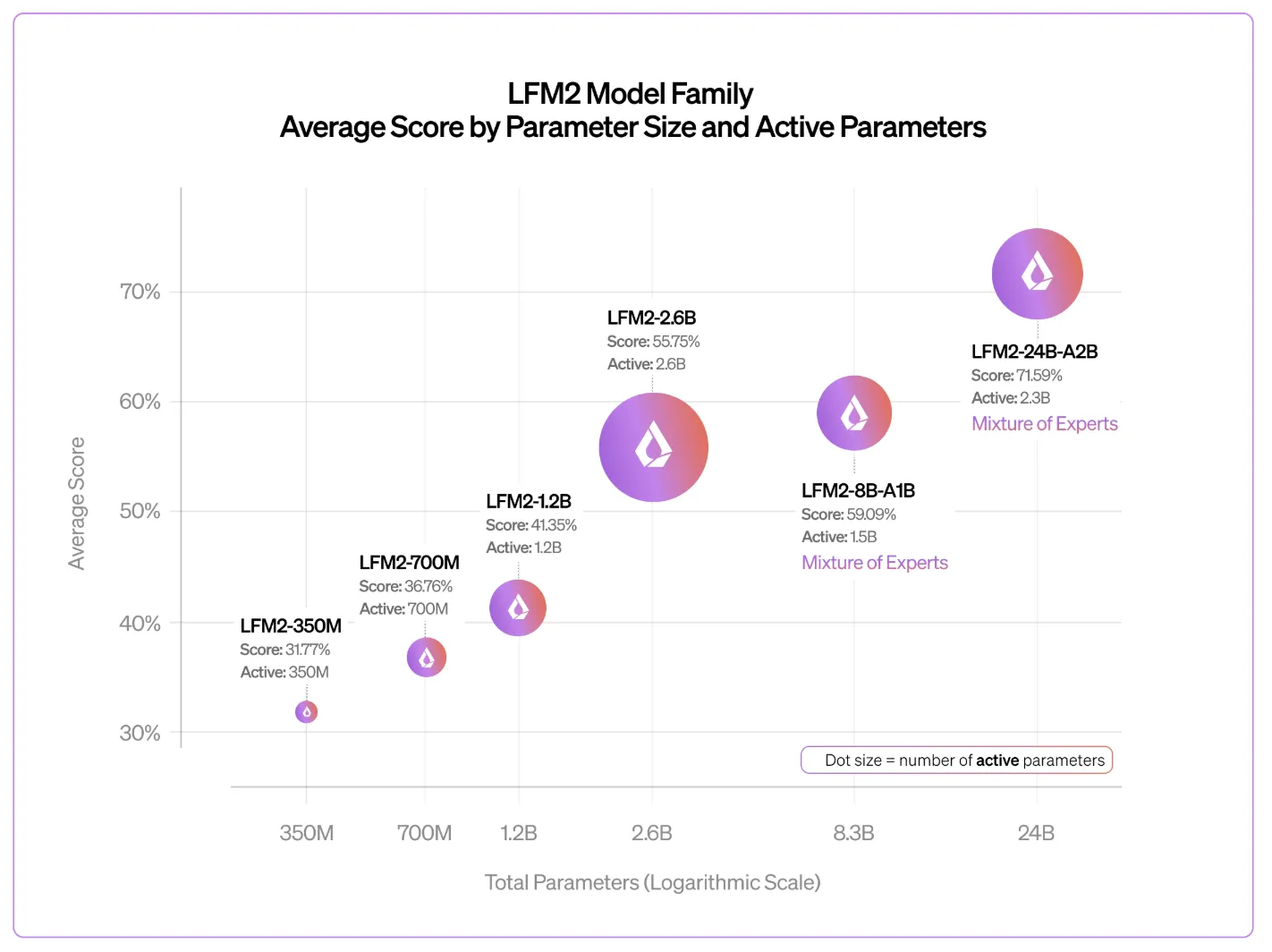

The Liquid AI team reports that the LFM2 family follows predictable, linear scaling behavior. Despite the small number of active parameters, the 24B-A2B consistently outperforms larger competitors.

- Logic and reasoning: In tests such as GSM8K and MATH-500, it rivals dense models twice its size.

- Throughput: When benchmarked on a single NVIDIA H100 using vLLM, it reached 26.8K total tokens per second at 1,024 concurrent requests, significantly outpacing OpenAI’s gpt-oss-20b and Qwen3-30B-A3B.

- Long context: The model features a 32k-token context window, optimized for privacy-sensitive RAG (Retrieval-Augmented Generation) pipelines and local document analysis.
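For a sense of scale, the aggregate throughput figure can be divided across the concurrent requests. This is a simple average under the assumption that load is spread evenly; the article does not say whether “total tokens” counts prompt tokens as well as generated ones, so the result is only a rough per-request share.

```python
TOTAL_TOKENS_PER_SEC = 26_800   # reported aggregate on a single H100
CONCURRENT_REQUESTS = 1_024     # reported concurrency level

per_request = TOTAL_TOKENS_PER_SEC / CONCURRENT_REQUESTS
print(f"{per_request:.1f} tokens/s per request on average")  # prints 26.2
```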

Technical cheat sheet

| Property | Specification |
| --- | --- |
| Total parameters | 24 billion |
| Active parameters | 2.3 billion |
| Architecture | Hybrid (gated convolution + GQA) |
| Layers | 40 (30 base / 10 attention) |
| Context length | 32,768 tokens |
| Training data | 17 trillion tokens |
| License | LFM Open License v1.0 |
| Native support | llama.cpp, vLLM, SGLang, MLX |

Key takeaways

- A2B hybrid architecture: The model uses a 1:3 ratio of Grouped Query Attention (GQA) blocks to gated short convolution blocks. By using linear-complexity “Base” layers for 30 of its 40 layers, the model achieves much faster prefill and decoding speeds with a significantly smaller memory footprint than traditional full-attention Transformers.

- Sparse MoE efficiency: Despite having 24 billion total parameters, the model activates only 2.3 billion parameters per token. This sparse Mixture of Experts design allows for the reasoning depth of a large model while keeping the inference latency and energy efficiency of a 2B-parameter model.

- True edge capability: Optimized through hardware-in-the-loop architecture search, the model is designed to fit in 32 GB of RAM. This makes it deployable on consumer hardware, including laptops with integrated GPUs and NPUs, without the need for expensive data center infrastructure.

- State-of-the-art performance: LFM2-24B-A2B outperforms larger competitors such as Qwen3-30B-A3B and OpenAI’s gpt-oss-20b in throughput. Benchmarks show that it reaches approximately 26.8K tokens per second on a single H100, showing near-linear scaling and high efficiency in long-context tasks up to its 32k-token window.
