This blog post focuses on new features and improvements. For a comprehensive list, including bug fixes, please see the Release notes.

Large-scale LLM inference typically involves deploying multiple replicas of the same model behind a load balancer. The standard approach treats these replicas as interchangeable and routes requests across them randomly or round-robin.

But LLM inference is not stateless. Each replica builds a KV cache of precomputed attention states for the prompts it has processed. When a request arrives at a replica that does not already have the relevant context cached, the model has to recompute everything from scratch. This wastes GPU cycles and increases latency.

The problem shows up in three common patterns: shared system prompts (every application has one), RAG pipelines (users query the same knowledge base), and multi-turn conversations (follow-up messages share context). In all three cases, a naive load balancer forces each replica to compute the same prefixes independently, multiplying the overhead by the number of replicas.

Clarifai 12.3 introduces KV cache-aware routing, which automatically detects prompt overlap across requests and routes each request to the replica most likely to have the relevant context already cached. This delivers significantly higher throughput and lower time to first token, with no configuration required.

This release also includes warm node pools for faster scaling and failover, session-aware routing to keep a user’s requests on the same replica, prediction caching for identical inputs, and Clarifai Skills for AI coding assistants.

KV cache-aware routing

When deploying an LLM with multiple replicas, standard load balancing distributes requests evenly across all replicas. This works well for stateless applications, but LLM inference has state: the KV cache.

The KV cache stores pre-computed key-value pairs from the attention mechanism. When a new request shares context with a previous request, the model can reuse these cached calculations instead of recalculating them. This makes inference faster and more efficient.

But if your load balancer does not take cache state into account, requests will spread randomly across replicas. Each replica ends up recalculating the same context independently, wasting GPU resources.

Three common patterns where this matters

Shared system prompts are the clearest example. Every application has system instructions that precede the user’s message. When 100 users hit the same model, a random load balancer spreads them across replicas, forcing each replica to compute the same system-prompt prefix independently. With 5 replicas, you compute that prompt 5 times instead of once.
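To make the 5-versus-1 arithmetic concrete, here is a toy Python model. It assumes (a simplification for illustration, not a description of Clarifai’s system) that a replica computes the shared prefix once, on the first request it receives, and keeps it cached with no eviction. The function name `prefix_computations` is hypothetical.

```python
import random


def prefix_computations(num_requests: int, num_replicas: int, affinity: bool, seed: int = 0) -> int:
    """Count how many times a shared system prompt gets prefilled.

    Toy assumptions: each replica computes the prefix on its first request,
    then keeps it in its KV cache forever (no eviction).
    """
    rng = random.Random(seed)
    warm = set()  # replicas that already hold the prefix
    computations = 0
    for _ in range(num_requests):
        # Affinity routing sends every request sharing this prefix to one replica;
        # random routing picks any replica uniformly.
        replica = 0 if affinity else rng.randrange(num_replicas)
        if replica not in warm:
            warm.add(replica)
            computations += 1
    return computations


print(prefix_computations(100, 5, affinity=False))  # 5: every replica ends up prefilling the prompt
print(prefix_computations(100, 5, affinity=True))   # 1
```

A real cache-aware router also weighs load, so it would not pin all 100 requests to a single replica; the toy only counts redundant prefills.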

RAG pipelines amplify the problem. Users querying the same knowledge base get nearly identical retrieved-document prefixes inserted into their prompts. Without cache-aware routing, this shared context is recomputed on each replica rather than reused. The overlap can be significant, especially when many users ask related questions within a short period.

Multi-turn conversations create implicit cache dependencies. Each follow-up message carries the entire preceding conversation as context. If the second message lands on a different replica than the first, the whole conversation history must be reprocessed, and the cost grows as conversations get longer.

How Clarifai solves it

Clarifai Compute Orchestration analyzes incoming requests, detects prompt overlap, and routes each request to the replica most likely to already have the relevant KV cache loaded.

The routing layer identifies shared prefixes and directs traffic to replicas where that context is already warm. This happens transparently at the platform level: you don’t need to configure cache keys, manage sessions, or modify your application code.
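Clarifai does not describe its routing internals, so the following is only a minimal sketch of one common way to do prefix-aware routing, under stated assumptions: prompts are split into fixed-size token blocks, each block gets a chained hash that identifies the whole prefix up to it (similar in spirit to vLLM’s automatic prefix caching), and the router picks the replica with the longest run of already-cached leading blocks, breaking ties by load. `BLOCK_SIZE`, `Replica`, and `route` are hypothetical names.

```python
from __future__ import annotations

import hashlib
from dataclasses import dataclass, field

BLOCK_SIZE = 16  # tokens per cache block (illustrative value, not a Clarifai setting)


def block_hashes(token_ids: list[int], block_size: int = BLOCK_SIZE) -> list[str]:
    """Chained hashes of full token blocks; each hash identifies the whole prefix so far."""
    hashes: list[str] = []
    prev = ""
    for start in range(0, len(token_ids) - block_size + 1, block_size):
        block = ",".join(map(str, token_ids[start:start + block_size]))
        prev = hashlib.sha256(f"{prev}|{block}".encode()).hexdigest()
        hashes.append(prev)
    return hashes


@dataclass
class Replica:
    name: str
    active_requests: int = 0
    cached_blocks: set[str] = field(default_factory=set)

    def matched_blocks(self, hashes: list[str]) -> int:
        """How many leading blocks of the prompt this replica already has cached."""
        count = 0
        for h in hashes:
            if h not in self.cached_blocks:
                break
            count += 1
        return count


def route(replicas: list[Replica], token_ids: list[int]) -> Replica:
    """Pick the replica with the longest cached prefix; ties go to the least-loaded one."""
    hashes = block_hashes(token_ids)
    best = max(replicas, key=lambda r: (r.matched_blocks(hashes), -r.active_requests))
    best.cached_blocks.update(hashes)  # assume the replica now holds this prompt's blocks
    best.active_requests += 1
    return best


replicas = [Replica("r0"), Replica("r1"), Replica("r2")]
system_prompt = list(range(64))  # stand-in for a tokenized system prompt
a = route(replicas, system_prompt + [1000, 1001])
b = route(replicas, system_prompt + [2000, 2001])  # shares the system prompt with a
c = route(replicas, list(range(500, 564)))         # unrelated prompt
print(a.name, b.name, c.name)  # r0 r0 r1
```

A production router would also cap how much traffic a hot replica can attract and account for cache eviction; this sketch ignores both.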

The result is significantly higher throughput and lower time to first token. GPU utilization improves because replicas spend less time on redundant computation, and users see faster responses because requests land on replicas that already hold the relevant context.

This improvement is available automatically in any multi-replica deployment of supported vLLM or SGLang models. No configuration required. No code changes needed.

Warm node pools

GPU cold starts occur when deployments need to scale beyond their current capacity. The typical sequence is: provision a cloud node (1-5 minutes), pull the container image, download the model weights, load them into GPU memory, and then serve the first request.

Setting min_replicas ≥ 1 keeps baseline capacity always warm. But when traffic exceeds this baseline, or a failover shifts load to a secondary node pool, you still incur infrastructure provisioning delays.

Warm node pools keep the GPU infrastructure prewarmed and ready to accept workloads.

How it works

For common GPU instance types, warm nodes are kept ready to accept workloads, so there is no wait for the cloud provider to provision one. When your deployment needs to scale, the node is already there.

When your primary node pool approaches capacity, Clarifai automatically begins preparing the next-priority node pool before traffic overflows. By the time overflow occurs, the infrastructure is ready.
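The post does not say what counts as "approaching capacity", so the sketch below assumes a simple utilization threshold. It illustrates the idea of warming the next-priority pool ahead of overflow; `NodePool`, `pools_to_prewarm`, and the 80% `PREWARM_THRESHOLD` are hypothetical, not Clarifai settings.

```python
from dataclasses import dataclass
from typing import List

PREWARM_THRESHOLD = 0.8  # hypothetical trigger: start warming at 80% utilization


@dataclass
class NodePool:
    name: str
    priority: int  # lower value = preferred pool
    capacity: int  # replicas the pool can host
    in_use: int = 0
    prewarming: bool = False

    @property
    def utilization(self) -> float:
        return self.in_use / self.capacity


def pools_to_prewarm(pools: List[NodePool], threshold: float = PREWARM_THRESHOLD) -> List[NodePool]:
    """Mark the next-priority pool for warming when the pool ahead of it nears capacity."""
    ordered = sorted(pools, key=lambda p: p.priority)
    newly_warming = []
    for current, nxt in zip(ordered, ordered[1:]):
        if current.utilization >= threshold and not nxt.prewarming:
            nxt.prewarming = True
            newly_warming.append(nxt)
    return newly_warming


primary = NodePool("primary", priority=1, capacity=10, in_use=9)
secondary = NodePool("secondary", priority=2, capacity=10)
print([p.name for p in pools_to_prewarm([primary, secondary])])  # ['secondary']
```

Triggering below 100% is the point of the design: the warm-up has to start while the primary pool can still absorb traffic.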

Warm capacity is held by lightweight placeholder workloads that are evicted as soon as a real model needs the GPU, so your model gets resources immediately without competing for scheduling.
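Holding capacity with evictable placeholders is a known pattern (in Kubernetes it is usually done with low-priority "pause" pods that higher-priority pods preempt). Clarifai does not say how its version works, so this is only a minimal sketch of the eviction logic, assuming whole-GPU accounting; `Workload` and `WarmNode` are hypothetical names.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Workload:
    name: str
    gpus: int
    placeholder: bool = False  # True for the lightweight capacity holders


@dataclass
class WarmNode:
    total_gpus: int
    workloads: List[Workload] = field(default_factory=list)

    def free_gpus(self) -> int:
        return self.total_gpus - sum(w.gpus for w in self.workloads)

    def schedule(self, model: Workload) -> bool:
        """Place a real model, evicting placeholders only as needed. Returns True on success."""
        reclaimable = sum(w.gpus for w in self.workloads if w.placeholder)
        if self.free_gpus() + reclaimable < model.gpus:
            return False  # would not fit even after evicting every placeholder
        # Evict placeholders one at a time until the model fits.
        while self.free_gpus() < model.gpus:
            victim = next(w for w in self.workloads if w.placeholder)
            self.workloads.remove(victim)
        self.workloads.append(model)
        return True


node = WarmNode(total_gpus=8, workloads=[Workload(f"placeholder-{i}", 1, placeholder=True) for i in range(8)])
ok = node.schedule(Workload("llm-replica", gpus=4))
print(ok, node.free_gpus(), sum(w.placeholder for w in node.workloads))  # True 0 4
```

The feasibility check before evicting matters: without it, a request that cannot fit would still tear down warm capacity for nothing.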

This eliminates the infrastructure provisioning step (1-5 minutes). A new replica still has to pull the container image and load the model weights, but combined with Clarifai’s pre-built base images and optimized model loading, scaling delays are dramatically reduced.

Session-aware routing and predictive caching

In addition to KV cache affinity, Clarifai 12.3 includes two additional routing optimizations that work together to improve performance.

Session-aware routing keeps a user’s requests on the same replica for the duration of a session. This is especially useful for conversational applications, where follow-up messages share context from the same user. Instead of relying on KV cache affinity to detect overlap, session-aware routing guarantees stickiness by routing on user or session IDs.

This works without any client-side changes. The platform handles session tracking automatically and ensures that requests with the same session ID reach the same replica, preserving KV cache locality.
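The post does not say how session IDs are mapped to replicas. A common technique for this kind of stickiness is consistent hashing, sketched below under that assumption: each session ID hashes to a point on a ring and is served by the next replica point clockwise, so removing one replica only remaps the sessions that lived on it. `HashRing` and the replica names are hypothetical.

```python
import bisect
import hashlib
from typing import Iterable


class HashRing:
    """Consistent-hash ring mapping session IDs to replicas (illustrative sketch)."""

    def __init__(self, replicas: Iterable[str], vnodes: int = 100) -> None:
        # Each replica gets many virtual points so sessions spread evenly.
        points = sorted((self._hash(f"{r}#{i}"), r) for r in replicas for i in range(vnodes))
        self._keys = [h for h, _ in points]
        self._owners = [r for _, r in points]

    @staticmethod
    def _hash(key: str) -> int:
        return int.from_bytes(hashlib.sha256(key.encode()).digest()[:8], "big")

    def replica_for(self, session_id: str) -> str:
        # First ring point clockwise from the session's hash, wrapping around the end.
        idx = bisect.bisect(self._keys, self._hash(session_id)) % len(self._keys)
        return self._owners[idx]


ring = HashRing(["replica-a", "replica-b", "replica-c"])
print(ring.replica_for("session-42") == ring.replica_for("session-42"))  # True
```

Plain `hash(session_id) % num_replicas` would also be sticky, but it reshuffles almost every session whenever the replica count changes, which throws away the KV cache locality the feature is meant to preserve.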

Prediction caching stores results keyed on the exact combination of inputs, model, and model version. When an identical request arrives, the cached result is returned immediately without invoking the model.

This is useful for scenarios where multiple users submit identical queries. For example, in a customer support application where users repeatedly ask the same questions, caching the prediction completely eliminates redundant inference calls.
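As a minimal sketch of the keying described above (not Clarifai’s implementation), the cache key can hash a canonical serialization of model, version, and inputs, so a new model version never serves a stale answer. `PredictionCache`, `fake_model`, and the model and version IDs are hypothetical; a real cache would also need eviction, such as an LRU bound or TTL.

```python
import hashlib
import json
from typing import Any, Callable, Dict


class PredictionCache:
    """Unbounded cache keyed on (model, version, inputs); illustrative only."""

    def __init__(self) -> None:
        self._store: Dict[str, Any] = {}

    @staticmethod
    def key(model_id: str, version_id: str, inputs: Any) -> str:
        # Canonical JSON (sorted keys) so logically identical inputs hash the same.
        payload = json.dumps({"model": model_id, "version": version_id, "inputs": inputs}, sort_keys=True)
        return hashlib.sha256(payload.encode()).hexdigest()

    def predict(self, model_id: str, version_id: str, inputs: Any, run_model: Callable[[Any], Any]) -> Any:
        k = self.key(model_id, version_id, inputs)
        if k not in self._store:
            self._store[k] = run_model(inputs)  # cache miss: run inference once
        return self._store[k]


calls = []


def fake_model(inputs):
    calls.append(inputs)
    return f"answer to {inputs['text']}"


cache = PredictionCache()
q = {"text": "How do I reset my password?"}
cache.predict("support-bot", "v1", q, fake_model)
cache.predict("support-bot", "v1", q, fake_model)  # served from cache
cache.predict("support-bot", "v2", q, fake_model)  # new version: runs again
print(len(calls))  # 2
```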

Both features are enabled automatically. There are no cache policies to configure and no session state to manage; the routing layer handles this transparently.

Clarifai Skills

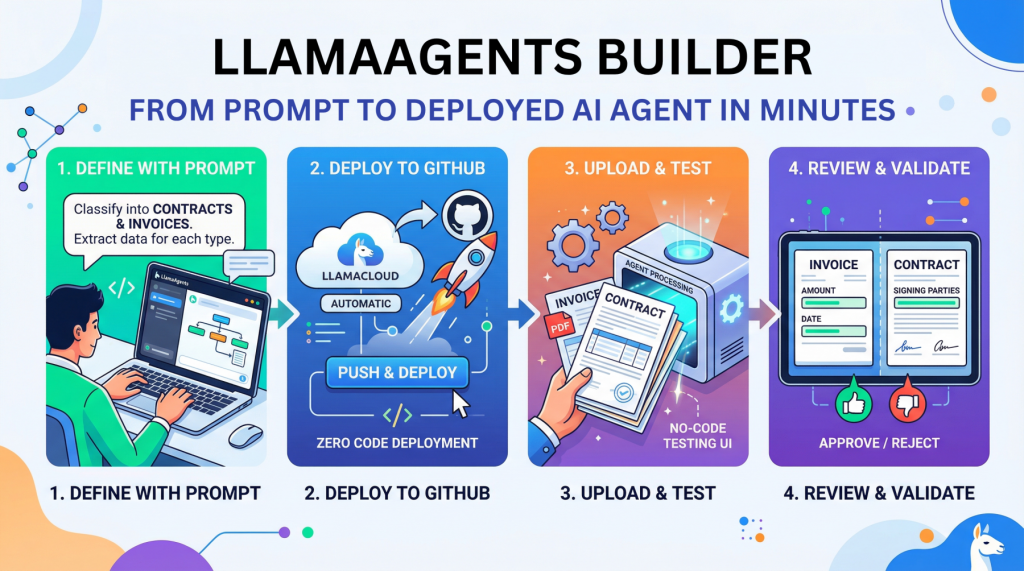

We’re launching Clarifai Skills, which turn AI coding assistants like Claude Code into experts on the Clarifai platform. Instead of explaining APIs from scratch, you describe what you want in plain language, and your assistant finds the right skill and gets to work.

Clarifai Skills are built on the open Agent Skills standard and work across 30+ agent platforms, including Claude Code, Cursor, GitHub Copilot, and Gemini. Each skill includes detailed reference documentation and working code examples.

Available skills cover the full platform: CLI commands (clarifai-cli), model upload (clarifai-model-upload), inference (clarifai-inference), MCP server development (clarifai-mcp), deployment lifecycle management (clarifai-deployment-lifecycle), observability (clarifai-observability), and more.

Installation is straightforward:

Once installed, skills are automatically activated when your request matches their description. Ask normally (“Deploy Qwen3-0.6B with vLLM”) and your assistant will generate the correct code using Clarifai’s APIs and conventions.

Complete documentation, installation instructions, and examples are available here.

Additional changes

Python SDK updates

Model serving and deployment

clarifai model deploy now includes multi-cloud GPU discovery and an instant deployment flow. A simplified config.yaml structure for model configuration makes it easier to get started.

clarifai model serve now reuses existing resources when they are available instead of creating new ones. Served models are private by default. Added a --keep flag to preserve the build directory after serving, which is useful for debugging and inspecting build artifacts.

Local Runners are now public by default. Models launched via a Local Runner are publicly accessible without manually adjusting visibility.

Model runners

Added VLLMOpenAIModelClass, a parent class with built-in override support and health checks for vLLM-backed models.

Improved model runner memory usage and latency. Reduced the memory footprint and improved response times in the model runner, and reduced overhead in SSE (server-sent events) streaming.

Automatic max_tokens clamping. The runner now automatically detects the backend’s max_seq_len and clamps max_tokens to that value, preventing out-of-range errors.
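A minimal sketch of the clamping rule as described, assuming max_seq_len has already been read from the backend. `clamp_max_tokens` is a hypothetical helper, not the runner’s API. The optional `prompt_tokens` argument is an extension beyond what the release notes state: since the context window must hold the prompt plus the generated tokens, subtracting the prompt length gives a tighter bound; with the default of 0 it reduces to the documented behavior.

```python
def clamp_max_tokens(requested: int, max_seq_len: int, prompt_tokens: int = 0) -> int:
    """Clamp a request's max_tokens so it cannot exceed the backend's limit.

    prompt_tokens=0 reproduces "clamp to max_seq_len"; passing the prompt
    length is a hypothetical stricter variant.
    """
    budget = max_seq_len - prompt_tokens
    if budget < 1:
        raise ValueError("prompt already fills the context window")
    return max(1, min(requested, budget))


print(clamp_max_tokens(100_000, 8192))                     # 8192
print(clamp_max_tokens(256, 8192))                         # 256
print(clamp_max_tokens(8192, 8192, prompt_tokens=1000))    # 7192
```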

Bug fixes

Fixed reasoning-model token tracking and streaming in the Agent class. Token tracking for reasoning models now correctly counts reasoning tokens. Fixed event-loop safety, streaming, and tool-call passthrough in the Agent class.

Fixed user/app context conflicts in the CLI. Resolved conflicts between user_id and app_id when using named contexts in CLI commands.

Fixed clarifai model init directory handling. The command now correctly updates an existing model directory instead of creating a subdirectory.

Are you ready to start building?

KV cache-aware routing is now available in all multi-replica deployments. Deploy a model with multiple replicas, and routing optimizations are enabled automatically. No configuration required.

Install Clarifai Skills to turn Claude Code, Cursor, or any AI coding assistant into an expert on the Clarifai platform. Read the complete installation guide, and see the complete Release notes for all updates in 12.3.

Sign up to start deploying models with smart routing, or join the community on Discord if you have any questions.
