Your agents are only as good as the knowledge they have access to – and only as secure as the permissions they enforce.

We are launching ACL Hydration (Access Control List Hydration) to secure knowledge workflows in the DataRobot Agent Workforce Platform: a unified framework for ingesting unstructured enterprise content, preserving the access controls of the source system, and enforcing those permissions at query time – so your agents can retrieve the right information for the right user, every time.

Problem: Enterprise knowledge without enterprise security

Every organization building agentic AI hits the same wall. Your agents need access to knowledge locked within SharePoint, Google Drive, Confluence, Jira, Slack, and dozens of other systems. But connecting to these systems is only half the challenge. The trickier problem is ensuring that when an agent retrieves a document to answer a question, it respects the same permissions that govern who can see that document in the source system.

Today, most RAG implementations ignore this completely. Documents are ingested, chunked, and stored in a vector database with no record of who was or was not supposed to access them. The result can be a system where a junior analyst’s query turns up board-level financial documents, or where a contractor’s agent retrieves HR files intended only for internal leadership. The challenge is not just propagating permissions from the data sources into the RAG system. Those permissions must also be updated continually as people are added to or removed from access groups, so that controls over who can access each type of source content stay synchronized.

This is not a theoretical risk. It’s why security teams are blocking GenAI rollouts, compliance officers are reluctant to sign off, and promising agent pilots stall before reaching production. Enterprise customers have been upfront: without access-control-aware retrieval, agentic AI cannot move beyond sandbox experiments.

Current solutions don’t solve this well. Some can enforce permissions, but only within their own ecosystems. Others support cross-platform connectors but lack native integration with agent workflows. Vertical applications are limited to internal search and cannot be extended as a platform. None of these options give organizations what they actually need: a cross-platform, ACL-aware knowledge layer designed specifically for agentic AI.

What DataRobot provides

DataRobot Secure Knowledge Workflows provide three core, interconnected capabilities in the Agent Workforce Platform for secure knowledge and context management.

1. Enterprise data connectors for unstructured content

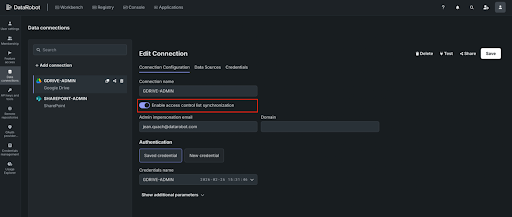

Connect to the systems where your organization’s knowledge actually lives. At launch, we’re providing production-level connectors for SharePoint, Google Drive, Confluence, Jira, OneDrive, and Box – with Slack, GitHub, Salesforce, ServiceNow, Dropbox, Microsoft Teams, Gmail, and Outlook following in subsequent releases.

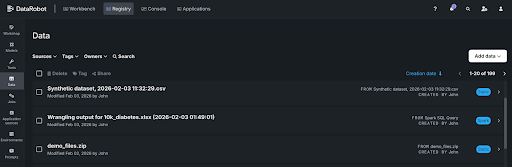

Each connector supports full historical backfills for initial ingestion and scheduled incremental syncs to keep your vector databases up to date. You can control access and manage connections through APIs or the DataRobot user interface.

These are not lightweight integrations. They are designed to handle production-scale workloads (100+ GB of unstructured data) with robust error handling, retries, and sync status monitoring.
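To make the backfill-then-incremental pattern concrete, here is a minimal sketch of a cursor-based sync with retries and a status field. It is an illustration only, not DataRobot's connector API: `SyncState`, `with_retry`, `sync`, and `FlakySource` are hypothetical names, and the change feed is simulated in memory.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Tuple

Change = Tuple[str, str]  # (document ID, latest text)


@dataclass
class SyncState:
    cursor: Optional[str] = None  # opaque position in the source's change feed
    documents: Dict[str, str] = field(default_factory=dict)
    status: str = "idle"


def with_retry(fetch: Callable[[], Tuple[List[Change], str]],
               attempts: int = 3, base_delay: float = 0.0):
    """Call fetch(), retrying transient connection errors with exponential backoff."""
    for attempt in range(attempts):
        try:
            return fetch()
        except ConnectionError:
            if attempt == attempts - 1:
                raise
            time.sleep(base_delay * 2 ** attempt)


def sync(state: SyncState,
         fetch_changes: Callable[[Optional[str]], Tuple[List[Change], str]]) -> int:
    """A None cursor means a full backfill; otherwise only newer changes are fetched."""
    state.status = "running"
    try:
        changes, new_cursor = with_retry(lambda: fetch_changes(state.cursor))
    except ConnectionError:
        state.status = "failed"
        raise
    for doc_id, text in changes:
        state.documents[doc_id] = text
    state.cursor = new_cursor
    state.status = "succeeded"
    return len(changes)


class FlakySource:
    """Simulated source system whose first request fails with a transient error."""

    def __init__(self) -> None:
        self.calls = 0
        self.feed: List[Change] = [("a", "v1"), ("b", "v1"), ("a", "v2")]

    def fetch(self, cursor: Optional[str]) -> Tuple[List[Change], str]:
        self.calls += 1
        if self.calls == 1:
            raise ConnectionError("transient failure")
        start = int(cursor) if cursor else 0
        return self.feed[start:], str(len(self.feed))


state = SyncState()
source = FlakySource()
first = sync(state, source.fetch)   # backfill, survives one transient failure
second = sync(state, source.fetch)  # incremental sync, nothing new to fetch
```

Persisting the cursor only after the changes are applied means a failed run can be repeated safely from the last known position.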

2. ACL hydration and metadata preservation

This is the core differentiator. When DataRobot ingests documents from a source system, it does not just extract the content; it also captures and maintains the access control metadata (ACLs) that defines who can see each document. User permissions, group memberships, role assignments: all of this is propagated to the vector database lookup, so that retrieval is aware of the permissions on the data being retrieved.

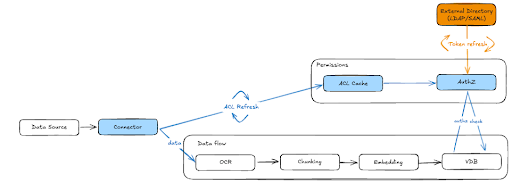

Here’s how it works (shown in Figure 1 below):

- During ingestion, document-level ACL metadata (including user, group, and role permissions) is extracted from the source system and persisted alongside the vector content.

- ACLs are stored in a central cache, separate from the vector database itself. This is a critical architectural decision: when permissions change on the source system, we update the ACL cache without re-indexing the entire VDB. Permission changes are propagated to all end consumers automatically. This includes permissions for locally uploaded files, which are governed by DataRobot RBAC.

- Near-real-time ACL updates keep the system in sync with source permissions. DataRobot polls for ACL changes on a recurring schedule and applies them within minutes. When someone’s access to SharePoint is revoked or a Google Drive folder is restructured, the change is reflected in DataRobot at the next scheduled update, so your agents never act on outdated permissions.

- External identity resolution maps users and groups from your organization’s directory (via LDAP/SAML) to ACL metadata, so that permission checks resolve correctly regardless of how identities are represented across different source systems.
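The design choice of keeping ACLs outside the vector index can be sketched as follows. This is a simplified illustration under stated assumptions, not DataRobot's implementation: `AclEntry`, `AclCache`, and the in-memory `vector_index` are hypothetical stand-ins.

```python
from dataclasses import dataclass, field
from typing import Dict, Iterable, Set, Tuple


@dataclass
class AclEntry:
    users: Set[str] = field(default_factory=set)
    groups: Set[str] = field(default_factory=set)


class AclCache:
    """Hypothetical central ACL store, keyed by source document ID."""

    def __init__(self) -> None:
        self._entries: Dict[str, AclEntry] = {}

    def hydrate(self, doc_id: str, users: Iterable[str], groups: Iterable[str]) -> None:
        # Replace the whole entry so that revocations take effect, not just grants.
        self._entries[doc_id] = AclEntry(set(users), set(groups))

    def is_allowed(self, doc_id: str, user: str, user_groups: Iterable[str]) -> bool:
        entry = self._entries.get(doc_id)
        if entry is None:
            return False  # fail closed when no ACL is known
        return user in entry.users or bool(entry.groups & set(user_groups))


# Stand-in for a vector index: chunk ID -> (source document ID, embedding).
vector_index: Dict[str, Tuple[str, Tuple[float, ...]]] = {
    "chunk-1": ("board-deck", (0.1, 0.9)),
    "chunk-2": ("board-deck", (0.2, 0.8)),
}
index_before = dict(vector_index)

acls = AclCache()
acls.hydrate("board-deck", users=["cfo"], groups=["board"])
allowed_before = acls.is_allowed("board-deck", "dana", ["board"])

# A later poll finds that the "board" group lost access in the source system.
acls.hydrate("board-deck", users=["cfo"], groups=[])
allowed_after = acls.is_allowed("board-deck", "dana", ["board"])
```

Because chunks reference only a document ID, the revocation changes the answer of `is_allowed` while the embeddings stay untouched, which is what makes permission updates cheap compared with re-indexing.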

3. Dynamic permission enforcement at query time

Storing ACLs is necessary but not sufficient. The real work happens at the time of retrieval.

When an agent queries a vector database on behalf of a user, DataRobot’s authorization layer evaluates the stored ACL metadata against the requesting user’s identity, group memberships, and roles – in real time. Only embeddings that the user is authorized to access are returned. Everything else is filtered out before it reaches the LLM.

This means that two users can ask the same agent the same question and get different answers – not because the agent is inconsistent, but because it correctly scopes its knowledge to what each user is allowed to see.

For documents ingested without external ACLs (such as files uploaded locally), DataRobot’s internal authorization system (AuthZ) handles access control, ensuring permissions are enforced consistently regardless of how content enters the platform.
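As a rough illustration of query-time filtering, the sketch below applies an ACL check to retrieval candidates before anything would reach the LLM, and falls back to an internal owner list for documents that have no external ACL. All names (`Principal`, `Chunk`, `authorized`, `retrieve`) and the data are hypothetical; the internal fallback is a simple stand-in for DataRobot's AuthZ, whose actual rules are not described here.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, List, Set


@dataclass(frozen=True)
class Principal:
    user: str
    groups: FrozenSet[str]


@dataclass(frozen=True)
class Chunk:
    chunk_id: str
    doc_id: str
    text: str
    score: float  # similarity score from the vector search


def authorized(chunk: Chunk, principal: Principal,
               external_acls: Dict[str, Dict[str, Set[str]]],
               internal_owners: Dict[str, Set[str]]) -> bool:
    acl = external_acls.get(chunk.doc_id)
    if acl is not None:
        return principal.user in acl["users"] or bool(principal.groups & acl["groups"])
    # No external ACL (for example a local upload): use the internal stand-in.
    return principal.user in internal_owners.get(chunk.doc_id, set())


def retrieve(candidates: List[Chunk], principal: Principal,
             external_acls: Dict[str, Dict[str, Set[str]]],
             internal_owners: Dict[str, Set[str]], top_k: int = 3) -> List[Chunk]:
    """Drop unauthorized chunks first, then rank what remains."""
    allowed = [c for c in candidates
               if authorized(c, principal, external_acls, internal_owners)]
    return sorted(allowed, key=lambda c: c.score, reverse=True)[:top_k]


candidates = [
    Chunk("c1", "q3-forecast", "Q3 revenue forecast", 0.92),
    Chunk("c2", "board-minutes", "Board meeting minutes", 0.88),
    Chunk("c3", "local-notes", "Uploaded planning notes", 0.75),
]
external_acls = {
    "q3-forecast": {"users": set(), "groups": {"finance"}},
    "board-minutes": {"users": {"cfo"}, "groups": {"board"}},
}
internal_owners = {"local-notes": {"analyst"}}

analyst = Principal("analyst", frozenset({"finance"}))
cfo = Principal("cfo", frozenset({"finance", "board"}))

analyst_hits = [c.chunk_id for c in retrieve(candidates, analyst, external_acls, internal_owners)]
cfo_hits = [c.chunk_id for c in retrieve(candidates, cfo, external_acls, internal_owners)]
```

The same candidate set yields different results per user, and the filtered chunks leave no trace in the output, so nothing downstream can reveal that they exist.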

How it works: step by step

Step 1: Connect your data sources

Register your organization’s data sources with DataRobot. Authenticate via OAuth, SAML, or service accounts, depending on the source system. Configure what you want to ingest – specific folders, file types, and metadata filters. DataRobot handles the initial backfill of historical content.

Step 2: Ingest content with ACL metadata

During document ingestion, DataRobot extracts content for segmentation and embedding while simultaneously capturing document-level ACL metadata from the source system. This metadata—including user permissions, group memberships, and role assignments—is stored in a central Access Control List (ACL) cache.

Content flows through a standard RAG pipeline: OCR (if necessary), chunking, embedding, and storage in the vector database of your choice, whether that is the FAISS-based solution built into DataRobot or your own Elastic, Pinecone, or Milvus instance. ACLs follow the data throughout the workflow.
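A minimal sketch of the ingestion step, assuming fixed-size character chunks with overlap: each chunk keeps a pointer to its source document, and the document's ACL is written once to a separate cache. The function names and record layout are hypothetical, and OCR and embedding are omitted to keep the example self-contained.

```python
from typing import Dict, Iterable, List, Set


def chunk_text(text: str, size: int = 40, overlap: int = 10) -> List[str]:
    """Split text into windows of `size` characters that overlap by `overlap`."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be non-negative and smaller than size")
    if not text:
        return []
    step = size - overlap
    return [text[i:i + size] for i in range(0, max(len(text) - overlap, 1), step)]


def ingest(doc_id: str, text: str, allowed_principals: Iterable[str],
           chunk_store: List[Dict[str, str]], acl_cache: Dict[str, Set[str]]) -> int:
    pieces = chunk_text(text)
    for n, piece in enumerate(pieces):
        # Every chunk carries its source document ID so the ACL can be looked up later.
        chunk_store.append({"chunk_id": f"{doc_id}#{n}", "doc_id": doc_id, "text": piece})
    # The ACL is stored once per document, not copied onto each chunk.
    acl_cache[doc_id] = set(allowed_principals)
    return len(pieces)


chunk_store: List[Dict[str, str]] = []
acl_cache: Dict[str, Set[str]] = {}
count = ingest("handbook", "x" * 100, {"group:all-staff"}, chunk_store, acl_cache)
```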

Step 3: Map external identities

DataRobot resolves user and group information. This mapping ensures that ACL permissions from source systems—which may use different identity representations—can be accurately evaluated against the user making the query.

Group memberships, including external groups such as Google Groups, are resolved and cached to support fast permission checks at retrieval time.
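Identity mapping can be pictured as two lookups: one from a source-specific identifier to a canonical directory user, and one that expands that user's group memberships, including nested groups, with a cache. The sketch below is an assumption-laden illustration; `IdentityResolver`, the alias table, and the sample users are hypothetical, and a real deployment would populate them from the directory rather than from literals.

```python
from typing import Dict, FrozenSet, List, Optional, Set, Tuple


class IdentityResolver:
    def __init__(self, aliases: Dict[Tuple[str, str], str],
                 memberships: Dict[str, Set[str]]) -> None:
        self._aliases = aliases          # (source, source-specific ID) -> canonical user ID
        self._memberships = memberships  # member (user or group) -> direct parent groups
        self._group_cache: Dict[str, FrozenSet[str]] = {}

    def canonical_user(self, source: str, external_id: str) -> Optional[str]:
        # Identifiers are compared case-insensitively; unknown identities map to None.
        return self._aliases.get((source, external_id.lower()))

    def groups_for(self, user_id: str) -> FrozenSet[str]:
        """Return direct and nested groups, cached after the first resolution."""
        if user_id not in self._group_cache:
            seen: Set[str] = set()
            stack: List[str] = list(self._memberships.get(user_id, ()))
            while stack:
                group = stack.pop()
                if group not in seen:  # also guards against membership cycles
                    seen.add(group)
                    stack.extend(self._memberships.get(group, ()))
            self._group_cache[user_id] = frozenset(seen)
        return self._group_cache[user_id]


resolver = IdentityResolver(
    aliases={
        ("sharepoint", "ana@corp.example"): "u1",
        ("google_drive", "ana.lopez@corp.example"): "u1",
    },
    memberships={
        "u1": {"finance-analysts"},
        "finance-analysts": {"finance"},
        "finance": {"all-staff"},
    },
)

from_sharepoint = resolver.canonical_user("sharepoint", "Ana@Corp.example")
from_drive = resolver.canonical_user("google_drive", "ana.lopez@corp.example")
groups = resolver.groups_for("u1")
```

Both source identities resolve to the same canonical user, so an ACL written in SharePoint terms and one written in Google Drive terms can be checked against a single set of groups.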

Step 4: Query with permission enforcement

When an agent or application queries a vector database, DataRobot’s AuthZ layer intercepts the request and evaluates it against the ACL cache. The system checks the requesting user’s identity and group memberships against the stored permissions for each candidate embedding.

Only authorized content is returned to the LLM to generate the response. Unauthorized embeddings are silently filtered out: the agent responds as if the restricted content does not exist, preventing any information leakage.

Step 5: Monitoring, auditing and governance

Every connector change, synchronization event, and ACL modification is logged for auditability. Administrators can track who has connected data sources, what data has been ingested, and what permissions have been applied – providing complete data lineage and compliance traceability.

Permission changes in source systems are propagated through scheduled ACL updates, and all end consumers – across all VDBs built from that source – are updated automatically.
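One way to picture the audit trail is an append-only log of structured events, where each ACL update records exactly which principals were granted or revoked. This is a hypothetical sketch: the event fields, action names, and `diff_acl` helper are assumptions for illustration, not DataRobot's logging schema.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Dict, List, Set


@dataclass(frozen=True)
class AuditEvent:
    timestamp: str
    actor: str
    action: str
    target: str
    details: Dict[str, List[str]]


def diff_acl(old: Set[str], new: Set[str]) -> Dict[str, List[str]]:
    """Summarize an ACL change as sorted lists of granted and revoked principals."""
    return {"granted": sorted(new - old), "revoked": sorted(old - new)}


class AuditLog:
    def __init__(self) -> None:
        self._events: List[AuditEvent] = []

    def record(self, actor: str, action: str, target: str,
               details: Dict[str, List[str]]) -> AuditEvent:
        event = AuditEvent(datetime.now(timezone.utc).isoformat(),
                           actor, action, target, details)
        self._events.append(event)  # events are only ever appended
        return event

    def for_target(self, target: str) -> List[AuditEvent]:
        return [e for e in self._events if e.target == target]

    def to_jsonl(self) -> str:
        return "\n".join(json.dumps(asdict(e), sort_keys=True) for e in self._events)


log = AuditLog()
log.record("admin@corp.example", "connector.created", "sharepoint-finance", {})
log.record("scheduler", "sync.completed", "sharepoint-finance", {"documents": ["412"]})

change = diff_acl(old={"cfo", "board", "dana"}, new={"cfo", "board"})
log.record("scheduler", "acl.updated", "board-deck", change)
```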

Why this matters to your agents

Secure Knowledge Workflows change what is possible with agentic AI in the enterprise.

Agents get the context they need without compromising security. By propagating ACLs, agents get the context they need to do the job, while the data that agents and end users access still respects the authentication and authorization privileges maintained within the enterprise. The agent does not become a backdoor to enterprise information.

Security teams can approve production deployments. By enforcing source system permissions end to end, the risk of unauthorized data exposure through GenAI is not only mitigated, but eliminated. Each retrieval respects the same access limits that govern the source system.

Builders can move faster. Instead of writing custom permission logic for each data source, builders get ACL-aware retrieval out of the box. Connect the source, ingest the content, and the permissions come with it. This removes weeks of custom security engineering from each agent project.

End users can trust the system. When users know that an agent surfaces only the information they are authorized to see, adoption accelerates. Trust is not a feature you enable; it is the result of an architecture that enforces permissions by design.

Get started

Secure Knowledge Workflows are now available in the DataRobot Agent Workforce Platform. If you’re building agents that need to reason over enterprise data, and you need those agents to respect who can see what, this is the capability that makes it possible. Try DataRobot or request a demo.