Introduction

Manual QA slows down your releases more than your code does. Engineering teams across the US are hitting their limit because test cycles cannot keep up with deployment speed. According to the World Quality Report 2025, approximately 40% of software delivery delays are directly related to inefficient testing.

Here’s the problem. You can’t scale testing in the same way you scale development.

This is where AI test automation within the AI-driven software development lifecycle (ADLC) changes the equation. By embedding testing in the ADLC itself, teams reduce QA bottlenecks, improve defect detection, and accelerate releases without increasing QA headcount. The transformation is not gradual. It is structural, and it starts with understanding how testing actually evolves within the ADLC.

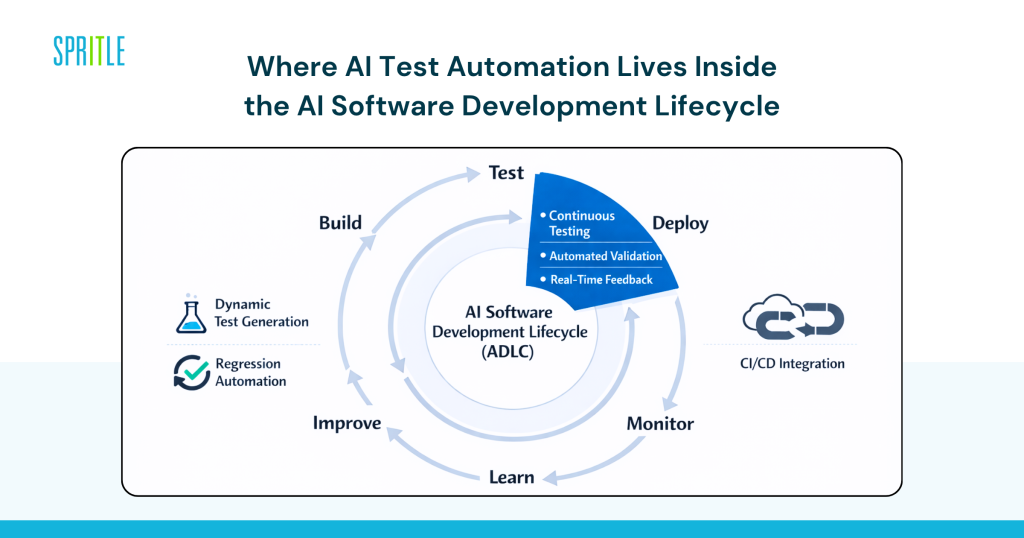

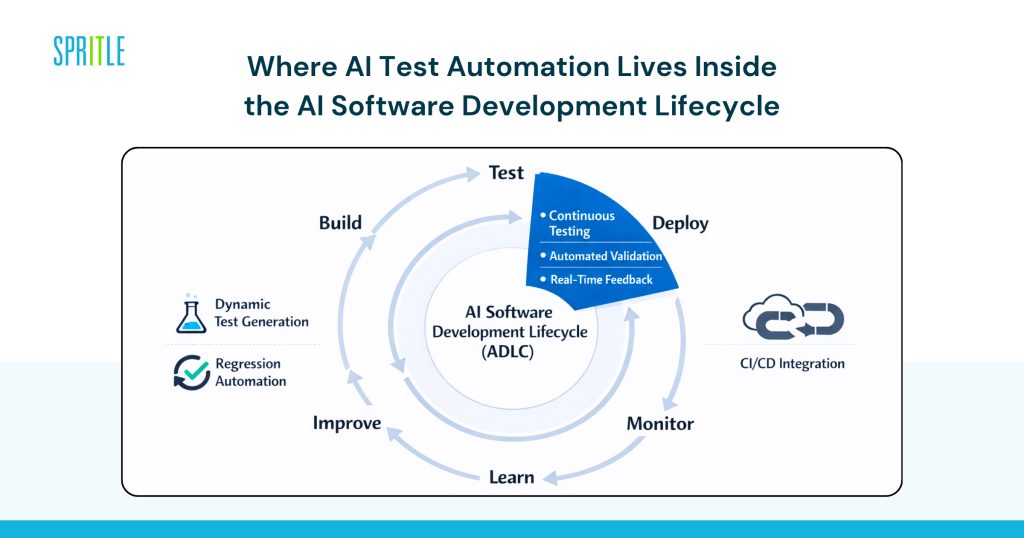

Where AI test automation lives within the AI software development lifecycle

AI test automation is not a layer that is added after development. It is an essential element of the AI software development lifecycle itself.

AI test automation in the ADLC refers to the use of machine learning models and intelligent systems to automatically create, execute, maintain, and optimize test cases across the development lifecycle.

This includes:

- Dynamic test creation based on user behavior

- Automated regression testing

- Real-time validation in CI/CD pipelines

Unlike in traditional QA, testing is no longer just a phase. It becomes a continuous system embedded in the AI-driven software development lifecycle.

Why does this change everything?

Manual QA relies on scripts, human effort, and static scenarios.

AI-based testing adapts.

It learns from:

- Production data

- User flows

- Historical defects

This transformation is what turns testing from a bottleneck into an accelerator.
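The Python sketch below illustrates what learning from user flows and historical defects can look like in practice. It ranks observed production journeys as candidate end-to-end test scenarios. It is a minimal sketch, not how any particular tool works. The session format (ordered lists of screen names), the per-screen defect counts, the scoring rule (flow frequency multiplied by one plus past defects), and the `rank_flows` name are all assumptions.

```python
from collections import Counter


def rank_flows(
    sessions: list[list[str]],
    defect_counts: dict[str, int],
    top_n: int = 3,
) -> list[tuple[tuple[str, ...], int]]:
    """Rank observed user flows as candidate end-to-end test scenarios.

    Hypothetical scoring: how often a flow occurs in production, multiplied by
    (1 + past defects recorded on the screens in that flow).
    """
    # Count identical journeys; single-screen sessions are too short to test.
    flow_freq = Counter(tuple(s) for s in sessions if len(s) >= 2)

    def score(flow: tuple[str, ...]) -> int:
        past_defects = sum(defect_counts.get(screen, 0) for screen in flow)
        return flow_freq[flow] * (1 + past_defects)

    # Highest score first; ties broken alphabetically for deterministic output.
    ranked = sorted(flow_freq, key=lambda f: (-score(f), f))
    return [(flow, score(flow)) for flow in ranked[:top_n]]


# Hypothetical production sessions, one ordered list of screens per visit.
sessions = [
    ["home", "search", "product", "checkout"],
    ["home", "search", "product", "checkout"],
    ["home", "account"],
    ["home", "search", "product"],
    ["home", "search", "product", "checkout"],
    ["home", "account"],
]
print(rank_flows(sessions, defect_counts={"checkout": 2}, top_n=2))
# [(('home', 'search', 'product', 'checkout'), 9), (('home', 'account'), 2)]
```

In a real system the weights would be learned and updated as new production data arrives. The fixed formula here only keeps the example readable.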

Why QA bottlenecks are forcing teams to rethink testing

The cost of quality assurance is rising, but the biggest problem is speed.

According to a 2025 Capgemini report, companies using AI-driven testing reduced testing effort by up to 30% while improving release pace.

What most teams miss is this: manual QA was designed for predictable systems, and modern applications are not predictable.

Pressure points that you can actually feel

- Regression cycles take days instead of hours

- QA teams stretched across multiple releases

- High cost of hiring skilled QA engineers in the US

- Increased complexity in microservices and APIs

Meanwhile, AI-native competitors are shipping updates daily, and the gap is widening quickly.

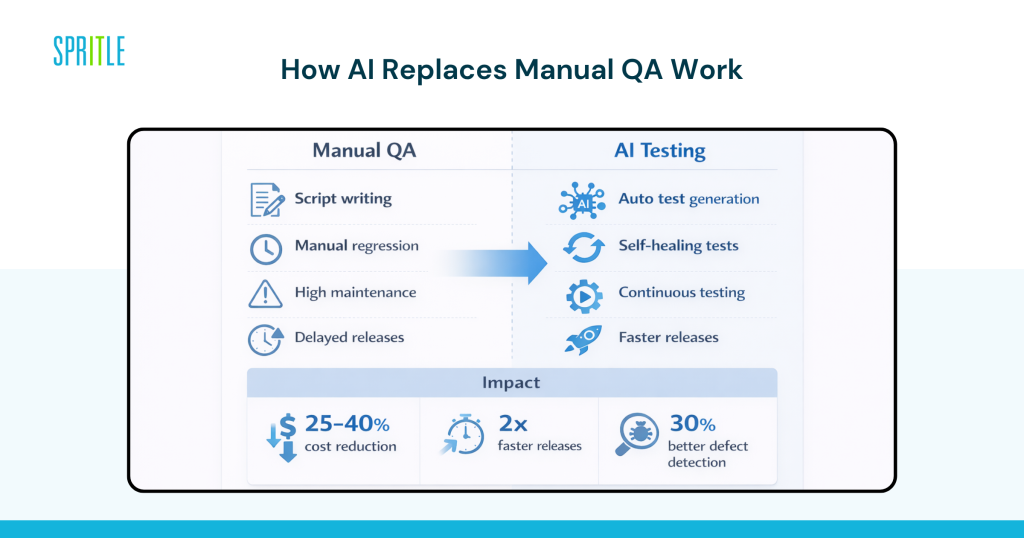

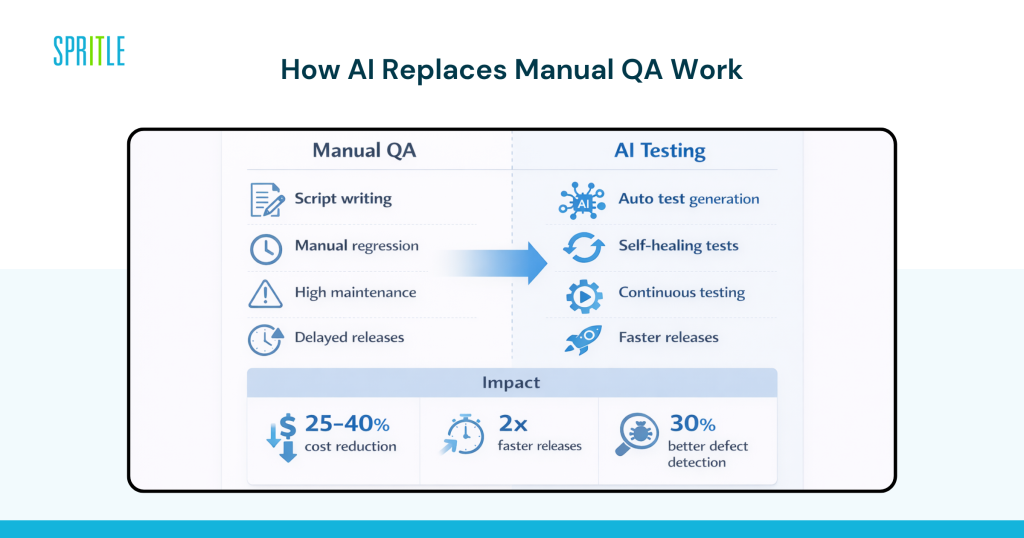

How AI test automation replaces manual work to ensure quality

This is where it gets practical. AI does not completely replace QA teams. It replaces specific types of work that do not scale with manual effort.

Smart test creation instead of script writing

Tools like Mabl, Testim, and Functionize automatically create test cases by analyzing application behavior.

Instead of writing scripts, your team sets intent.

AI handles:

- UI test generation

- API validation scenarios

- Edge case detection

Gartner estimates that AI-based test creation can reduce manual scripting effort by up to 50%.
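Tools differ in how they detect edge cases, and many combine learned models with classic rules. The sketch below is a minimal, rule-based stand-in. It is not machine learning and not any vendor's method. It applies boundary-value analysis to a hypothetical integer API field, generating values at and just beyond each limit along with whether the API should accept them. `IntField` and `boundary_cases` are illustrative names.

```python
from dataclasses import dataclass


@dataclass
class IntField:
    """Hypothetical schema entry for an integer request parameter."""
    name: str
    minimum: int
    maximum: int


def boundary_cases(spec: IntField) -> list[tuple[int, bool]]:
    """Return (value, should_be_accepted) pairs around the field's limits."""
    lo, hi = spec.minimum, spec.maximum
    candidates = [lo - 1, lo, lo + 1, hi - 1, hi, hi + 1]
    # Drop duplicates (e.g. when minimum == maximum) while keeping order.
    unique = list(dict.fromkeys(candidates))
    return [(value, lo <= value <= hi) for value in unique]


quantity = IntField(name="quantity", minimum=1, maximum=100)
for value, accepted in boundary_cases(quantity):
    expectation = "accept" if accepted else "reject"
    print(f"{quantity.name}={value}: expect API to {expectation}")
```

Each pair can become an API validation scenario: send the value and assert the response matches the expected accept or reject outcome.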

Self-healing tests that reduce maintenance costs

One of the biggest hidden costs in QA is test maintenance.

AI tools solve this problem with self-healing capabilities:

- UI changes are detected automatically

- Test scripts are updated without manual intervention

- False failures are reduced

This alone can reduce maintenance time by 30 to 40 percent.
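Vendors implement self-healing in different ways. The sketch below shows one common idea, fallback matching on recorded element attributes. It is written in plain Python so it runs without a browser. The page model (a list of attribute dictionaries), the `locate_with_healing` helper, and the 0.5 similarity threshold are hypothetical stand-ins, not any tool's API.

```python
from typing import Optional

Element = dict[str, str]  # attributes of one UI element, e.g. {"id": ..., "text": ...}


def locate_with_healing(
    page: list[Element], fingerprint: Element, min_score: float = 0.5
) -> tuple[Optional[Element], bool]:
    """Find the element a test recorded, healing the locator if its id changed.

    Returns (element, healed). healed is True when the recorded id was missing
    and the closest attribute match was used instead.
    """
    if not fingerprint:
        return None, False
    # Fast path: the recorded id still exists on the page.
    recorded_id = fingerprint.get("id")
    for el in page:
        if recorded_id is not None and el.get("id") == recorded_id:
            return el, False
    # Fallback: score each element by the share of recorded attributes it still matches.
    best, best_score = None, 0.0
    for el in page:
        matches = sum(1 for key, value in fingerprint.items() if el.get(key) == value)
        score = matches / len(fingerprint)
        if score > best_score:
            best, best_score = el, score
    if best is not None and best_score >= min_score:
        return best, True
    return None, False  # nothing similar enough: report a genuine failure


# Recorded when the test was written; the button's id later changed in a redesign.
fingerprint = {"id": "submit-btn", "tag": "button", "text": "Place order", "class": "btn primary"}
page_after_redesign = [
    {"id": "cancel", "tag": "button", "text": "Cancel", "class": "btn"},
    {"id": "order-submit", "tag": "button", "text": "Place order", "class": "btn primary"},
]
element, healed = locate_with_healing(page_after_redesign, fingerprint)
if healed:
    fingerprint["id"] = element["id"]  # persist the healed locator for the next run
print(healed, fingerprint["id"])  # True order-submit
```

A threshold that is too low lets a test silently bind to the wrong element. For that reason, healed locators are worth logging for human review.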

AI-assisted continuous testing within CI/CD

Testing no longer waits for the release cycle.

Inside an AI-assisted CI/CD pipeline, tests run continuously:

- Every commit triggers a validation

- Defects are detected earlier

- Feedback loops improve future test coverage

This creates an intelligent testing and QA system that evolves with your application.
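Here is a minimal sketch of the commit-triggered loop described above. It assumes the pipeline already knows which source files each test covers (for example, from a coverage report) and keeps a pass/fail history per test. `TrackedTest`, `select_tests`, and `record_result` are hypothetical names. Ordering by historical failure rate is one simple prioritization heuristic, not the method any specific product uses.

```python
from dataclasses import dataclass


@dataclass
class TrackedTest:
    """History the pipeline keeps for one test (hypothetical structure)."""
    name: str
    covered_files: set[str]
    runs: int = 0
    failures: int = 0

    @property
    def failure_rate(self) -> float:
        return self.failures / self.runs if self.runs else 0.0


def select_tests(changed_files: set[str], suite: list[TrackedTest]) -> list[str]:
    """On each commit, run only tests covering changed files, most failure-prone first."""
    impacted = [t for t in suite if t.covered_files & changed_files]
    impacted.sort(key=lambda t: (-t.failure_rate, t.name))
    return [t.name for t in impacted]


def record_result(test: TrackedTest, passed: bool) -> None:
    """Feedback loop: every CI result updates the history used for the next selection."""
    test.runs += 1
    if not passed:
        test.failures += 1


suite = [
    TrackedTest("checkout_flow", {"cart.py", "payment.py"}, runs=10, failures=3),
    TrackedTest("login_flow", {"auth.py"}, runs=10, failures=5),
    TrackedTest("cart_totals", {"cart.py"}, runs=10, failures=1),
]
print(select_tests({"cart.py"}, suite))  # ['checkout_flow', 'cart_totals']
```

Selecting only impacted tests is what keeps per-commit feedback fast. A full-suite run on a schedule is a sensible safety net for gaps in the coverage map.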

What this means for cost, speed and engineering efficiency

This is the section that most CTOs care about. Does this really move the needle?

The short answer is yes, but only when implemented within the ADLC.

Measurable business impact

Organizations adopting AI test automation report:

- 25 to 40% reduction in quality assurance costs

- 2x faster release cycles

- Up to 30% improvement in defect detection rates

Source: World Quality Report 2025

Why the ROI is real

You don’t just save time.

You:

- Reduce manual effort

- Reduce production defects

- Speed up feedback loops

This is how teams start reducing software development costs with AI without compromising quality.

Real-world examples of AI testing in action

Netflix: continuous testing at scale

Netflix uses automated and intelligent testing systems as part of its deployment process.

Impact:

- Thousands of tests executed per deployment

- Minimal manual intervention to ensure quality

- High system reliability at large scale

Their approach aligns closely with ADLC principles.

A US fintech company modernizes its QA

A New York-based fintech company has adopted AI testing tools integrated with CI/CD.

Results:

- Regression testing reduced from 48 hours to less than 8 hours

- Defect leakage decreased by 20%

- Faster compliance verification

A Chicago SaaS platform scales without new QA hires

One SaaS company replaced 60% of its manual QA workflow with AI-driven testing.

Results:

- No additional QA hires despite product growth

- Faster sprint cycles

- Greater confidence in each release

Risks to plan for in advance

AI test automation is not a plug-and-play feature. There are real risks if you implement it poorly.

Blind trust in AI outputs

AI-generated tests still need validation. Without supervision, false positives can appear and edge cases can be missed.
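One way to keep supervision in the loop is to quarantine AI-generated tests until they prove stable and a person signs off. The sketch below assumes each generated test is run repeatedly against builds already known to be good. Under that assumption, any failure points at the test rather than the product. The `GeneratedTest` model, the five-run requirement, and the status strings are illustrative assumptions.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class GeneratedTest:
    """An AI-generated test awaiting promotion into the main suite (hypothetical model)."""
    name: str
    # Pass/fail history on builds already known to be good, oldest first.
    baseline_results: list[bool] = field(default_factory=list)
    approved_by: Optional[str] = None  # reviewer who signed off, if anyone


def review_status(test: GeneratedTest, required_passes: int = 5) -> str:
    recent = test.baseline_results[-required_passes:]
    # Failing on a known-good build points at the test itself (a false positive).
    if not all(recent):
        return "suspect"
    if len(recent) < required_passes:
        return "quarantined: needs more runs"
    if test.approved_by is None:
        return "quarantined: awaiting human review"
    return "promoted"


for t in [
    GeneratedTest("gen_refund_edge_case", [True] * 5),
    GeneratedTest("gen_login_timeout", [True, False, True]),
]:
    print(t.name, "->", review_status(t))
```

Only "promoted" tests would gate releases. A "suspect" test goes back to a human to decide whether the test or the baseline is wrong.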

Poor data leads to poor testing

AI systems rely on historical and behavioral data. Weak data sets limit effectiveness.

Tool fragmentation across teams

Using multiple disconnected testing tools creates inefficiencies rather than solving them.

Compliance and security concerns

In regulated industries, AI-generated tests must meet stringent validation standards.

The honest answer is this: AI testing works best as part of a structured AI-driven software development lifecycle, not as a stand-alone initiative.

What do highly mature teams do differently with AI testing?

This is where the disconnect occurs between teams experimenting with AI and those working to scale it.

1. They integrate testing into the development process, not after it

Testing is continuous, not a final step.

2. They standardize tools and workflows

Consistency between teams ensures scalability.

3. They invest in feedback loops

Every test result feeds back into improving future tests.

4. They combine AI with human oversight

AI handles scale. Humans handle judgment.

5. They partner strategically when needed

This is where many teams turn to ADLC consulting services or work with an AI software development company to accelerate adoption.

If you’re trying to build this from scratch, expect a learning curve.

How to start implementing AI test automation without disrupting delivery

You don’t need a complete transformation on the first day. You need a controlled rollout.

- Identify high-impact QA bottlenecks such as regression testing

- Deploy AI testing tools in a limited-scope environment

- Integrate progressively with existing CI/CD pipelines

- Define governance for validation and security

- Scale based on measurable improvements
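Here is a small go/no-go check that compares a pilot against its baseline, as one way to scale based on measured results. The 48-hour and 8-hour figures mirror the fintech example earlier in this article. The escaped-defect counts, the 30% speedup threshold, and all names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class CycleMetrics:
    regression_hours: float  # wall-clock time for one full regression run
    escaped_defects: int     # defects found in production during the period


def pct_reduction(before: float, after: float) -> float:
    """Percentage drop from before to after (negative if it got worse)."""
    if before == 0:
        return 0.0
    return (before - after) / before * 100


def should_scale(
    baseline: CycleMetrics,
    pilot: CycleMetrics,
    min_speedup_pct: float = 30.0,
    max_extra_defects: int = 0,
) -> bool:
    """Expand the rollout only if the pilot is faster AND quality did not slip."""
    faster = pct_reduction(baseline.regression_hours, pilot.regression_hours) >= min_speedup_pct
    quality_held = pilot.escaped_defects - baseline.escaped_defects <= max_extra_defects
    return faster and quality_held


# 48 h -> 8 h mirrors the fintech example; the defect counts are made up.
baseline = CycleMetrics(regression_hours=48, escaped_defects=10)
pilot = CycleMetrics(regression_hours=8, escaped_defects=8)
print(f"{pct_reduction(48, 8):.1f}% faster, scale up: {should_scale(baseline, pilot)}")
# 83.3% faster, scale up: True
```

Requiring both conditions keeps a faster but leakier pilot from being mistaken for a win.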

What separates successful teams is not the tool. It is how the tool is integrated into the ADLC.

If you want to move faster, working with an experienced AI development lifecycle partner or an enterprise AI development solutions provider can shorten the path significantly.

Frequently asked questions

Q: How does AI test automation fit into the AI-driven software development lifecycle?

A: AI test automation is built directly into the AI-driven software development lifecycle as a continuous process. It automates the process of test creation, execution, and optimization while integrating with CI/CD pipelines for real-time validation.

Q: Will AI test automation completely replace QA engineers?

A: No. It replaces repetitive manual tasks such as regression testing and script maintenance. QA engineers focus more on strategy, edge cases, and validation within the AI software development lifecycle.

Q: What kind of return on investment can US companies expect from AI testing?

A: Most organizations see a 25 to 40 percent reduction in QA costs and significantly faster release cycles. The ROI depends on how well AI testing is integrated into the ADLC.

Q: Should we build AI testing capabilities internally or work with a partner?

A: If your team lacks AI experience in the SDLC, working with an AI software development company or ADLC consulting services provider can accelerate results and reduce implementation risks.

Conclusion

AI test automation does more than improve quality assurance. It redefines how testing works within the AI-driven software development lifecycle. Teams that get it right eliminate bottlenecks, reduce costs, and ship faster without compromising quality.

The gap between manual QA testing and AI-driven testing is rapidly widening.

If your team is evaluating how to modernize testing, the right ADLC services or AI-driven development lifecycle services can help you move from experimentation to real impact.