The multimodal AI market was valued at $2.51 billion in 2025 and is projected to reach $42.38 billion by 2034, growing at a compound annual growth rate of 36.92%, according to Precedence Research. That growth is not driven by smarter algorithms alone. It is driven by better multimodal AI training data.

Yet most teams underestimate what it actually takes to build this data. They treat it as a labeling job. It is not. It is a coordination challenge: multiple data types collected in sync, annotated with consistent schemas, and aligned across modalities before a model ever sees a single example.

At Shaip, now part of the Ubiquity ecosystem, we work with AI teams building datasets across text, speech, image, video, sensor, and medical imaging modalities. The patterns that separate high-performing multimodal models from expensive failures come down to data quality decisions made early — decisions this guide walks you through.

By the end of this article, you will understand how multimodal models learn, where the leading models in 2026 get their edge, which industries are deploying multimodal AI at scale with verified results, and exactly how to source the data that makes it work.

What Is Multimodal AI Training Data?

Multimodal AI training data is a structured collection of paired or interleaved inputs from two or more data modalities — such as images with text captions, audio recordings with transcripts, or video with synchronized sensor readings — used to train AI models to understand and reason across those modalities together. Unlike unimodal datasets that train models on a single data type, multimodal datasets require cross-modal alignment: each example must convey consistent meaning across all modalities present.

The distinction matters in practice. A text-only model trained on clinical notes learns to predict diagnoses from words. A multimodal model trained on clinical notes and the corresponding imaging data can catch patterns neither modality reveals alone. That combination requires a fundamentally different approach to data collection, annotation, and quality control.

Shaip’s multimodal training data services cover six core modalities: text, speech, image, video, sensor, and medical imaging.

Unimodal vs. Multimodal at a glance:

The journey from single-modality to multimodal AI represents a significant technological advance. Early AI systems were highly specialized: image classifiers could identify objects but could not interpret associated text descriptions, while natural language processors could analyze sentiment but missed the visual cues that provided crucial context.

Understanding what multimodal data is sets the stage for understanding how models actually use it — which is where most teams find the first hard surprises.

How Multimodal AI Models Actually Learn

Most multimodal models follow the same three-stage pipeline: encode, fuse, decode. What happens at each stage determines what kind of training data you need.

Stage 1: Encoders — Converting Raw Data Into Vectors

Each modality enters through a specialized encoder that converts raw input into a numerical embedding. A vision encoder (typically a convolutional network or Vision Transformer) converts an image into a feature vector. A text encoder, usually transformer-based, does the same for text. An audio encoder processes frequency patterns from speech or sound.
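To make the encoder stage concrete, here is a toy sketch. The functions `toy_text_encoder`, `toy_image_encoder`, and `toy_audio_encoder` are hypothetical stand-ins for a transformer, a CNN or ViT, and an audio network. They only illustrate the contract every real encoder shares: different raw inputs go in, and fixed-size, L2-normalized vectors of the same dimension come out. The dimension `DIM = 8` is an arbitrary assumption.

```python
import hashlib
import math

DIM = 8  # shared embedding size; an arbitrary choice for illustration


def l2_normalize(vec: list[float]) -> list[float]:
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec] if norm > 0 else vec


def pool_into_buckets(values: list[float]) -> list[float]:
    """Sum a flat sequence into DIM buckets by position (stand-in for learned layers)."""
    vec = [0.0] * DIM
    for i, v in enumerate(values):
        vec[i % DIM] += v
    return l2_normalize(vec)


def toy_text_encoder(text: str) -> list[float]:
    """Hash each lowercased token into a bucket (stand-in for a transformer encoder)."""
    vec = [0.0] * DIM
    for token in text.lower().split():
        bucket = int(hashlib.sha256(token.encode()).hexdigest(), 16) % DIM
        vec[bucket] += 1.0
    return l2_normalize(vec)


def toy_image_encoder(pixels: list[list[float]]) -> list[float]:
    """Flatten a grayscale image and pool it (stand-in for a CNN or ViT)."""
    return pool_into_buckets([p for row in pixels for p in row])


def toy_audio_encoder(samples: list[float]) -> list[float]:
    """Pool absolute amplitudes (stand-in for a spectrogram-based audio encoder)."""
    return pool_into_buckets([abs(s) for s in samples])


if __name__ == "__main__":
    embeddings = {
        "text": toy_text_encoder("hairline crack near weld seam"),
        "image": toy_image_encoder([[0.1, 0.9, 0.4], [0.3, 0.2, 0.8]]),
        "audio": toy_audio_encoder([0.05, -0.2, 0.4, -0.1]),
    }
    for name, emb in embeddings.items():
        print(name, len(emb))  # every modality yields a DIM-sized vector
```

In a real system each encoder is followed by a learned projection into the shared space; the point here is only that the fusion stage receives vectors of the same size regardless of modality.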

These encoders can be trained from scratch, or initialized from pre-trained models like OpenAI’s CLIP, which learns a shared embedding space for images and text by training on 400 million image-caption pairs. The quality of your training data at this stage determines how well each encoder generalizes to your domain.
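CLIP’s shared embedding space comes from a symmetric contrastive objective: within a batch, each image should score highest against its own caption, and each caption against its own image. The sketch below computes that loss in plain Python on small, made-up vectors. The temperature of 0.07 matches the value CLIP’s learnable temperature is initialized to; the function names and toy data are assumptions for illustration, not OpenAI’s implementation.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def cross_entropy(logits: list[float], target: int) -> float:
    """Negative log-softmax probability of the target index (numerically stable)."""
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum_exp - logits[target]


def clip_style_loss(image_embs, text_embs, temperature: float = 0.07) -> float:
    """Symmetric InfoNCE: pair i is the positive, every other pair in the batch a negative."""
    n = len(image_embs)
    logits = [[cosine(image_embs[i], text_embs[j]) / temperature for j in range(n)]
              for i in range(n)]
    image_to_text = sum(cross_entropy(logits[i], i) for i in range(n)) / n
    text_to_image = sum(cross_entropy([logits[i][j] for i in range(n)], j)
                        for j in range(n)) / n
    return (image_to_text + text_to_image) / 2


images = [[1.0, 0.0], [0.0, 1.0]]
aligned_captions = [[0.9, 0.1], [0.1, 0.9]]
swapped_captions = [[0.1, 0.9], [0.9, 0.1]]  # same captions, attached to the wrong images
print(clip_style_loss(images, aligned_captions) < clip_style_loss(images, swapped_captions))  # True
```

Swapping two captions raises the loss sharply, which is exactly how a mispaired example pushes the shared space in the wrong direction during training.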

Stage 2: Fusion — Where the Model Builds Cross-Modal Understanding

Fusion is where multimodal learning actually happens. The model has to reconcile embeddings from different modalities into a single representation. There are four main strategies:

- Early fusion: Raw inputs are combined before encoding. Simple, but sensitive to noise in any one modality.

- Late fusion: Each modality is encoded separately and combined at the decision layer. More robust, but potentially misses fine-grained cross-modal relationships.

- Hybrid fusion: A mix of both, processing some modalities jointly and others independently.

- Dynamic (adaptive) fusion: The model learns to weight each modality based on input quality at inference time. If audio is noisy, the model can down-weight it automatically. This approach, discussed in Encord’s ICLR 2026 analysis, is increasingly treated as best practice for production deployments.
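The differences between these strategies are easiest to see side by side. This is a minimal sketch over plain Python lists, assuming each modality has already been encoded into a feature vector (for early fusion) or class scores (for late and dynamic fusion). In the dynamic variant, the per-modality `quality` scores are a hypothetical input; a production model would learn these weights, for example with a small gating network, rather than receive them.

```python
import math


def early_fusion(features: dict[str, list[float]]) -> list[float]:
    """Concatenate per-modality features (in a fixed order) into one joint input."""
    fused: list[float] = []
    for name in sorted(features):
        fused.extend(features[name])
    return fused


def late_fusion(scores: dict[str, list[float]]) -> list[float]:
    """Average per-modality class scores at the decision layer."""
    n = len(scores)
    num_classes = len(next(iter(scores.values())))
    return [sum(s[c] for s in scores.values()) / n for c in range(num_classes)]


def dynamic_fusion(scores: dict[str, list[float]], quality: dict[str, float]):
    """Weight each modality's class scores by a softmax over its quality estimate."""
    names = list(scores)
    top = max(quality[name] for name in names)
    exps = {name: math.exp(quality[name] - top) for name in names}
    total = sum(exps.values())
    weights = {name: exps[name] / total for name in names}
    num_classes = len(scores[names[0]])
    fused = [sum(weights[name] * scores[name][c] for name in names)
             for c in range(num_classes)]
    return fused, weights


scores = {"audio": [0.9, 0.1], "text": [0.2, 0.8]}
print(late_fusion(scores))  # both modalities get an equal say
fused, weights = dynamic_fusion(scores, {"audio": -2.0, "text": 1.0})  # noisy audio
print(weights)              # audio is down-weighted
```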

(CALLOUT: Cross-modal attention is the mechanism that makes fusion precise. Popularized by the co-attentional layers of ViLBERT (Lu et al., 2019) and since used in models such as Flamingo, it works by computing attention scores between tokens from different modalities: for example, aligning the word “crack” in a maintenance report with the specific region of an X-ray image where a fracture appears. Dual-encoder models such as CLIP and ALIGN take a different route, aligning modalities through a contrastive objective rather than cross-attention. In both cases, training data quality directly determines how accurately these cross-modal relationships form.)
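A bare-bones version of cross-modal attention can be written as scaled dot-product attention in which text tokens supply the queries and image regions supply the keys and values. The sketch omits the learned query, key, and value projections that real models apply, and the three-dimensional toy vectors are invented for illustration.

```python
import math


def softmax(xs: list[float]) -> list[float]:
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def cross_modal_attention(text_queries, image_keys, image_values):
    """Each text token attends over image regions: softmax(q . k / sqrt(d)), then a
    weighted sum of the region values."""
    d = len(image_keys[0])
    outputs, attention = [], []
    for q in text_queries:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in image_keys]
        weights = softmax(scores)
        attended = [sum(w * v[j] for w, v in zip(weights, image_values))
                    for j in range(len(image_values[0]))]
        outputs.append(attended)
        attention.append(weights)
    return outputs, attention


# Toy example: the token "crack" should attend most strongly to region 2.
regions = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 4.0]]  # region 2 shows the fracture
crack_token = [[0.0, 0.0, 4.0]]
_, attn = cross_modal_attention(crack_token, regions, regions)
print(max(range(len(regions)), key=lambda r: attn[0][r]))  # 2
```

If the training pairs that taught the model what “crack” looks like were misaligned, the query vector would point at the wrong region and the attention weights would follow it.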

Stage 3: Decoder — Producing Outputs

The decoder generates the model’s output: a text answer, a bounding box, a classification label, or a generated image. For the decoder to be reliable, the fusion layer must have seen enough correctly aligned examples during training to learn stable cross-modal associations.

This has a direct implication for your dataset: misaligned pairs, such as an audio clip paired with the wrong transcript or an image captioned with a description of a different scene, corrupt the fusion layer’s learning. A mislabeled example in a paired dataset can do more damage than one in a unimodal dataset, because it teaches a false association between two modalities at once.

Shaip’s data annotation and labeling process includes cross-modal consistency checks at every stage for exactly this reason.
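One common way to automate a cross-modal consistency check, sketched here as an illustration rather than a description of Shaip’s internal tooling, is to embed both sides of every pair with a pre-trained dual encoder and flag pairs whose partner is not the best match or whose similarity falls below a threshold. The embeddings and the 0.3 threshold are assumptions; a threshold like this would typically be tuned against a manually reviewed sample.

```python
import math


def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def flag_suspect_pairs(image_embs, text_embs, min_similarity=0.3):
    """Return indices of pairs that look misaligned and should go to human review."""
    suspects = []
    for i, img in enumerate(image_embs):
        sims = [cosine(img, txt) for txt in text_embs]
        best = max(range(len(sims)), key=sims.__getitem__)
        if best != i or sims[i] < min_similarity:
            suspects.append(i)
    return suspects


image_embs = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
text_embs = [[0.9, 0.1], [0.1, 0.9], [-1.0, 0.2]]  # pair 2 reads like a caption for another scene
print(flag_suspect_pairs(image_embs, text_embs))  # [2]
```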

The 2026 Multimodal AI Model Landscape

Which AI models use multimodal training data? Most leading foundation models released since 2023 are either natively multimodal or actively adding modalities. GPT-4o, Gemini 2.5, Claude 3.7 Sonnet, Llama 4 Scout and Maverick, and Phi-4-multimodal all process at least two modalities natively. Fine-tuning any of them on domain-specific tasks requires domain-specific multimodal training data, and that data is where your competitive edge lives.

Here is how the 2026 landscape breaks down by modality and training data implication:

Multimodal Training Data by Industry Vertical

Different industries need different modality combinations. Here are five verticals where multimodal AI has moved from pilot to production — with verified public deployments.

1. Healthcare: Combining Imaging, Clinical Notes, and Speech

Google DeepMind’s Med-Gemini (2024) demonstrated what happens when multimodal training data is done right at scale. In the paper introducing the model family (Saab et al., 2024), Med-Gemini was evaluated on 14 medical benchmarks spanning text, multimodal, and long-context tasks, and set a new state of the art on 10 of them.

The training data requirements are strict: imaging data must be DICOM-compliant, patient records must be de-identified to HIPAA standards, and speech data from physician dictation must be transcribed with medical vocabulary accuracy. Shaip’s healthcare training data catalog provides de-identified, HIPAA-compliant datasets across CT, X-ray, MRI, physician dictation, and EHR data — built specifically for teams training clinical AI models.

2. Autonomous Vehicles and Robotics: Sensor Fusion at Scale

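Tesla’s Full Self-Driving system was originally built on eight cameras, ultrasonic sensors, and a forward-facing radar. Tesla has since shifted to a camera-only approach it calls Tesla Vision, dropping first radar and then ultrasonic sensors, so its system now processes eight camera streams simultaneously to make real-time driving decisions. The training dataset is built from millions of on-road miles with frame-level annotation across every camera stream.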

Waymo and Boston Dynamics (partnering with Google DeepMind on Gemini Robotics, announced at CES 2026) rely on LiDAR + camera + IMU fusion. As Jensen Huang noted at CES 2026, physical AI — robots that combine vision, language, and sensor understanding — represents the next major multimodal frontier.

The common thread: these systems degrade when sensor streams are not tightly synchronized in the training data. Temporal misalignment between camera frames and LiDAR sweeps can create ghost artifacts that the model learns as real features.
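A first line of defense is to pair each camera frame with its nearest LiDAR sweep and reject frames where no sweep falls within a tolerance. This sketch assumes both streams are already timestamped against a common clock, in seconds, and sorted ascending; the 5 ms tolerance is an illustrative assumption, since the right value depends on sensor rates and vehicle speed.

```python
from bisect import bisect_left


def match_frames_to_sweeps(camera_ts, lidar_ts, tolerance_s=0.005):
    """Pair each camera frame index with the nearest LiDAR sweep index.

    Frames with no sweep within tolerance_s are returned separately so they can be
    excluded from training instead of silently paired with a stale sweep.
    """
    pairs, rejected = [], []
    for i, t in enumerate(camera_ts):
        j = bisect_left(lidar_ts, t)  # index of the first sweep at or after t
        candidates = [k for k in (j - 1, j) if 0 <= k < len(lidar_ts)]
        if not candidates:
            rejected.append(i)
            continue
        best = min(candidates, key=lambda k: abs(lidar_ts[k] - t))
        if abs(lidar_ts[best] - t) <= tolerance_s:
            pairs.append((i, best))
        else:
            rejected.append(i)
    return pairs, rejected


camera = [0.000, 0.033, 0.066, 0.100]
lidar = [0.001, 0.050, 0.099]
print(match_frames_to_sweeps(camera, lidar))  # ([(0, 0), (3, 2)], [1, 2])
```

Rejecting rather than force-pairing is the key design choice: a frame matched to a sweep 16 ms away is exactly the kind of misalignment that produces ghost artifacts.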

3. Retail and E-Commerce: Visual Search Meets Natural Language

Amazon’s visual search product, StyleSnap, combines image embeddings with text query processing to match a customer’s uploaded photo against catalog items. The training data requires paired image-text examples where the visual and textual descriptions are semantically equivalent — not just keyword-matched.

When product images are annotated with structured attributes (color, material, silhouette, style era) and paired with real customer search queries, match quality and conversion tend to improve. That makes it a problem of AI data collection quality, not model architecture.

4. Customer Experience: Speech, Text, and Sentiment Together

Contact center AI systems are moving from text-only chatbots to multimodal models that process the spoken word, the transcript, and the emotional tone in parallel. A customer saying “this is fine” in a flat, low-energy voice is not the same as saying it with rising inflection. Text-only systems miss the distinction entirely.

Building effective training data for this use case requires audio recordings with corresponding transcripts, emotion labels, intent labels, and contextual metadata, all annotated consistently. Because every utterance carries several label layers that must agree with one another, the annotation effort is substantially higher than for text-only intent classification.
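One way to keep those label layers consistent is to store each utterance as a single record and validate it before it enters the dataset. The schema below is a hypothetical example: the field names and the emotion and intent label sets are assumptions to adapt to your own taxonomy.

```python
from dataclasses import dataclass, field

# Hypothetical label sets; replace with your project's taxonomy.
EMOTIONS = {"neutral", "satisfied", "frustrated", "angry"}
INTENTS = {"billing", "cancellation", "technical_support", "other"}


@dataclass
class UtteranceAnnotation:
    call_id: str
    start_s: float      # segment start within the call recording, in seconds
    end_s: float        # segment end, in seconds
    speaker: str        # e.g. "agent" or "customer"
    transcript: str
    emotion: str
    intent: str
    metadata: dict = field(default_factory=dict)  # channel, locale, etc.


def validate(ann: UtteranceAnnotation, call_duration_s: float) -> list[str]:
    """Return a list of problems; an empty list means the record passes."""
    problems = []
    if not (0 <= ann.start_s < ann.end_s <= call_duration_s):
        problems.append("segment falls outside the call audio")
    if not ann.transcript.strip():
        problems.append("empty transcript")
    if ann.emotion not in EMOTIONS:
        problems.append(f"unknown emotion label: {ann.emotion!r}")
    if ann.intent not in INTENTS:
        problems.append(f"unknown intent label: {ann.intent!r}")
    return problems


ok = UtteranceAnnotation("c-001", 12.4, 15.1, "customer", "this is fine", "frustrated", "billing")
print(validate(ok, call_duration_s=300.0))  # []
```

Note that the transcript alone (“this is fine”) would suggest a neutral label; the emotion field is where the audio’s flat tone gets recorded.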

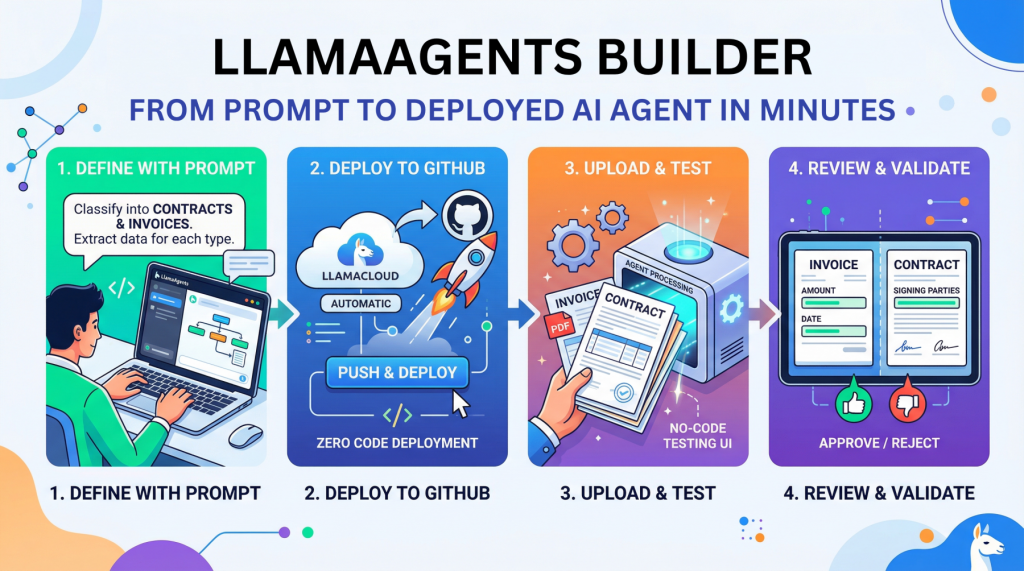

5. Document AI and Enterprise: The Fastest-Growing Vertical in 2026

Document AI is underreported in most published guides to multimodal AI, yet it is one of the fastest-growing enterprise deployment categories. It combines PDF layout, embedded images, OCR text, and structured fields to automate invoice processing, contract review, mortgage underwriting, and regulatory compliance.

Microsoft Azure Document Intelligence and Amazon Textract are among the most widely deployed platforms, but both typically need domain-specific customization to perform reliably on non-standard document layouts. The training data for this use case combines scanned documents (image), extracted text (OCR), structural annotations (bounding boxes for fields), and semantic labels (this field is “invoice total”, not “line item subtotal”).
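A single field annotation ties those four layers together. The record and checks below are a minimal, hypothetical sketch: the `FieldAnnotation` class, the pixel-coordinate bounding-box convention, and the `invoice_total` numeric check are assumptions for illustration, not the input format of either platform.

```python
from dataclasses import dataclass


@dataclass
class FieldAnnotation:
    page: int                                  # zero-based page index
    bbox: tuple[float, float, float, float]    # (x0, y0, x1, y1) in page pixels
    ocr_text: str                              # text extracted from the box
    label: str                                 # semantic label, e.g. "invoice_total"


def check_field(ann: FieldAnnotation, page_width: float, page_height: float,
                num_pages: int) -> list[str]:
    """Cross-check the image, OCR, and label layers of one annotated field."""
    problems = []
    x0, y0, x1, y1 = ann.bbox
    if not (0 <= ann.page < num_pages):
        problems.append("page index out of range")
    if not (0 <= x0 < x1 <= page_width and 0 <= y0 < y1 <= page_height):
        problems.append("bounding box falls outside the page")
    if not ann.ocr_text.strip():
        problems.append("box contains no OCR text")
    if ann.label == "invoice_total":
        try:
            float(ann.ocr_text.replace(",", "").strip().lstrip("$"))
        except ValueError:
            problems.append("invoice_total is not a number")
    return problems


total = FieldAnnotation(0, (1200.0, 2900.0, 1500.0, 2960.0), "$1,234.50", "invoice_total")
print(check_field(total, page_width=2550, page_height=3300, num_pages=1))  # []
```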

Shaip’s computer vision data catalog includes document image datasets annotated for form parsing and layout understanding across financial, legal, and healthcare document types.

Key Challenges in Multimodal AI Training Data

Data scarcity and imbalance

High-quality aligned multimodal data is expensive to collect and annotate. The scarcity is not just about total volume; it is about the lack of balanced, representative paired examples for the exact business task. Multimodal imbalance, in which a dominant modality suppresses the learning signal from weaker ones, is now a recognized research area with its own benchmarks.
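A quick way to surface the balance problem before training is to count only complete, paired examples per label, since incomplete rows do not teach cross-modal associations. This sketch assumes each example is a dictionary with a `label` key and one key per modality (`None` when missing); those names are hypothetical.

```python
from collections import Counter


def paired_balance_report(examples, required=("image", "text")):
    """Count fully paired examples per label and the max/min imbalance ratio."""
    complete = Counter(
        ex["label"] for ex in examples
        if all(ex.get(m) is not None for m in required)
    )
    labels = sorted({ex["label"] for ex in examples})
    counts = {label: complete.get(label, 0) for label in labels}
    if not counts:
        return {}, 0.0
    smallest = min(counts.values())
    ratio = float("inf") if smallest == 0 else max(counts.values()) / smallest
    return counts, ratio


data = [
    {"label": "defect", "image": "a.png", "text": "hairline crack"},
    {"label": "defect", "image": "b.png", "text": "corrosion at joint"},
    {"label": "defect", "image": "c.png", "text": "dent on panel"},
    {"label": "ok", "image": "d.png", "text": "no visible damage"},
    {"label": "ok", "image": "e.png", "text": None},  # caption missing: not a usable pair
]
print(paired_balance_report(data))  # ({'defect': 3, 'ok': 1}, 3.0)
```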

Alignment and synchronization

Cross-modal alignment is still one of the core engineering bottlenecks. In video, audio must match the correct frame range. In document AI, layout regions must map correctly to text and labels. In healthcare, imaging must line up with reports and structured records. Surveys on multimodal alignment and fusion continue to highlight alignment as a central challenge.
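For the video case, mapping an audio segment to the frames it overlaps is simple arithmetic once both streams share a clock: frame k covers the interval from k/fps to (k+1)/fps. The function below is an illustrative sketch assuming a constant frame rate; variable-frame-rate video would need per-frame timestamps instead.

```python
import math


def audio_span_to_frames(start_s: float, end_s: float, fps: float, num_frames: int):
    """Return the inclusive (first, last) frame indices overlapping [start_s, end_s).

    Frame k covers [k / fps, (k + 1) / fps), assuming a constant frame rate.
    """
    if end_s <= start_s:
        raise ValueError("segment end must be after its start")
    first = max(0, math.floor(start_s * fps))
    last = min(num_frames - 1, math.ceil(end_s * fps) - 1)
    if first > last:
        raise ValueError("segment does not overlap the video")
    return first, last


# A spoken phrase from 1.0 s to 2.0 s in 25 fps video maps to frames 25 through 49.
print(audio_span_to_frames(1.0, 2.0, fps=25, num_frames=1000))  # (25, 49)
```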

Missing or imperfect modalities

Real-world enterprise systems rarely get complete inputs every time. Sensors fail. Calls have noisy audio. Videos may lack transcripts. Recent survey work on imperfect data conditions shows missing, corrupted, and poorly aligned modalities remain a practical limit on real-world performance.
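Two common mitigations are to fuse only the modalities that are actually present and, during training, to randomly hide modalities (often called modality dropout) so the model learns not to depend on any single stream. The sketch below shows both on plain lists; averaging is a deliberately simple stand-in for a learned fusion layer, and the function names are hypothetical.

```python
import random


def masked_mean_fusion(embeddings: dict) -> list[float]:
    """Average the embeddings of present modalities; missing ones are None."""
    present = [v for v in embeddings.values() if v is not None]
    if not present:
        raise ValueError("no modality available for this example")
    dim = len(present[0])
    return [sum(v[i] for v in present) / len(present) for i in range(dim)]


def modality_dropout(embeddings: dict, drop_prob: float, rng: random.Random) -> dict:
    """Training-time augmentation: hide each present modality with probability
    drop_prob, but always keep at least one."""
    available = [name for name, v in embeddings.items() if v is not None]
    if not available:
        return dict(embeddings)
    kept = {name for name in available if rng.random() >= drop_prob}
    if not kept:
        kept = {rng.choice(available)}
    return {name: (v if name in kept else None) for name, v in embeddings.items()}


sample = {"audio": None, "text": [1.0, 3.0], "video": [3.0, 1.0]}  # audio failed to record
print(masked_mean_fusion(sample))  # [2.0, 2.0]
augmented = modality_dropout(sample, drop_prob=0.5, rng=random.Random(7))
print(masked_mean_fusion(augmented))
```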

Bias and fairness across modalities

Bias does not disappear in multimodal systems; it can compound, because each modality brings its own skews. A 2024 survey on fairness and bias in multimodal AI notes that bias research in large multimodal models remains less mature than bias research in LLMs, even as real-world use expands.

How Multimodal AI Training Data Works

A strong multimodal data pipeline usually includes five stages:

1. Data Collection

Gather raw assets across the modalities relevant to the use case, such as image-text, audio-text, video-audio-text, or document-image-text. Large open efforts are growing quickly: Encord’s E-MM1 describes 107 million groups across five modalities, while NVIDIA recently highlighted a 1,700-hour open-source multimodal driving dataset for physical AI.

2. Alignment

This is the hard part. Files must correspond at the right object, time, or document level. Alignment and fusion remain major technical challenges in multimodal machine learning, and poor alignment degrades both training quality and downstream retrieval.
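At the file level, a basic alignment step is to group assets that belong to the same sample and set aside any sample with a missing modality. This sketch assumes a hypothetical naming convention in which files for one sample share a stem (for example `clip_0001.jpg`, `clip_0001.txt`, `clip_0001.wav`); real pipelines often key on an explicit sample ID in a manifest instead.

```python
from collections import defaultdict
from pathlib import PurePosixPath


def group_by_sample(paths, required=("jpg", "txt", "wav")):
    """Group files by shared stem and split them into complete and incomplete samples."""
    groups = defaultdict(dict)
    for p in paths:
        path = PurePosixPath(p)
        groups[path.stem][path.suffix.lstrip(".").lower()] = p
    complete, incomplete = {}, {}
    for stem, files in sorted(groups.items()):
        missing = [ext for ext in required if ext not in files]
        if missing:
            incomplete[stem] = missing
        else:
            complete[stem] = files
    return complete, incomplete


files = ["raw/clip_0001.jpg", "raw/clip_0001.txt", "raw/clip_0001.wav",
         "raw/clip_0002.jpg", "raw/clip_0002.txt"]
complete, incomplete = group_by_sample(files)
print(sorted(complete))  # ['clip_0001']
print(incomplete)        # {'clip_0002': ['wav']}
```

Because grouping is by stem only, identically named files in different folders would collide; that is one reason explicit sample IDs are the safer convention.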

3. Annotation

Annotation must capture not just labels inside one modality, but relationships across modalities:

- image-caption consistency

- speaker-to-transcript mapping

- frame-to-event timestamps

- document layout mapped to extracted text

- cross-modal instructions and expected outputs

4. Quality Control

Quality checks must validate synchronization, completeness, rights, language accuracy, and label consistency across modalities. New work on multimodal data quality classification shows that semi-synthetic methods are already being used to curate higher-quality multimodal corpora at scale.
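Label consistency is the easiest of these checks to quantify. A standard statistic is Cohen’s kappa, which measures agreement between two annotators after correcting for the agreement expected by chance. The sketch below is a plain-Python version for illustration; it is not the method used in the quality-classification work mentioned above, and the emotion labels are invented.

```python
from collections import Counter


def cohens_kappa(labels_a: list[str], labels_b: list[str]) -> float:
    """Chance-corrected agreement between two annotators labeling the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two non-empty label lists of equal length")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:  # both annotators used one identical label throughout
        return 1.0
    return (observed - expected) / (1 - expected)


annotator_1 = ["frustrated", "frustrated", "neutral", "neutral"]
annotator_2 = ["frustrated", "neutral", "neutral", "neutral"]
print(cohens_kappa(annotator_1, annotator_2))  # 0.5
```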

5. Evaluation

Production teams should evaluate:

- cross-modal retrieval accuracy

- grounding quality

- hallucination rate

- robustness to missing modalities

- fairness across demographic groups and contexts
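Two of these metrics are straightforward to compute once you have model outputs. The sketch below shows recall@k for cross-modal retrieval, assuming a square similarity matrix in which query i’s correct match is candidate i, and a simple fairness measure: the largest accuracy gap between groups. Both function names are hypothetical, and the accuracy gap is only one of many possible fairness metrics.

```python
def recall_at_k(similarity: list[list[float]], k: int) -> float:
    """Fraction of queries whose correct candidate (same index) ranks in the top k."""
    hits = 0
    for i, row in enumerate(similarity):
        top_k = sorted(range(len(row)), key=lambda j: row[j], reverse=True)[:k]
        hits += int(i in top_k)
    return hits / len(similarity)


def accuracy_gap(correct: list[bool], groups: list[str]) -> tuple[float, dict]:
    """Largest difference in accuracy between any two groups, plus per-group accuracy."""
    totals: dict = {}
    hits: dict = {}
    for ok, group in zip(correct, groups):
        totals[group] = totals.get(group, 0) + 1
        hits[group] = hits.get(group, 0) + int(ok)
    accuracy = {g: hits[g] / totals[g] for g in totals}
    return max(accuracy.values()) - min(accuracy.values()), accuracy


# Rows are image queries, columns are captions; the diagonal holds the true pairs.
sims = [[0.9, 0.1, 0.3],
        [0.2, 0.4, 0.8],
        [0.1, 0.5, 0.7]]
print(recall_at_k(sims, 1), recall_at_k(sims, 2))  # 0.6666666666666666 1.0
gap, per_group = accuracy_gap([True, True, False, True],
                              ["group_a", "group_a", "group_b", "group_b"])
print(gap)  # 0.5
```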

Multimodal AI Training Data: Key Quality Requirements

How Shaip Supports Multimodal AI Training Data at Scale

Shaip provides end-to-end multimodal data services — from custom collection and annotation to off-the-shelf licensed datasets — supporting enterprise AI teams across healthcare, technology, and eCommerce. Our Generative AI Platform handles multimodal annotation workflows, fine-tuning data preparation, and RLHF pipelines across text, speech, image, video, and medical imaging modalities.

Key capabilities include:

- Multimodal dataset annotation across 65+ languages for speech and text modalities

- Medical data catalog including physician dictation audio, transcribed records, X-ray and CT scan datasets, and EHR-structured data

- Custom data collection services for aligned audio-visual, video-text, and document-image paired datasets

- RLHF and human feedback pipelines for fine-tuning multimodal foundation models

- Compliance-first workflows with de-identification, consent management, and full data lineage documentation

For enterprises building multimodal AI at scale, partnering with a specialized data provider accelerates development timelines and ensures the annotation quality that multimodal fusion layers require. Explore Shaip’s multimodal AI training data solutions or contact our team to discuss your use case.